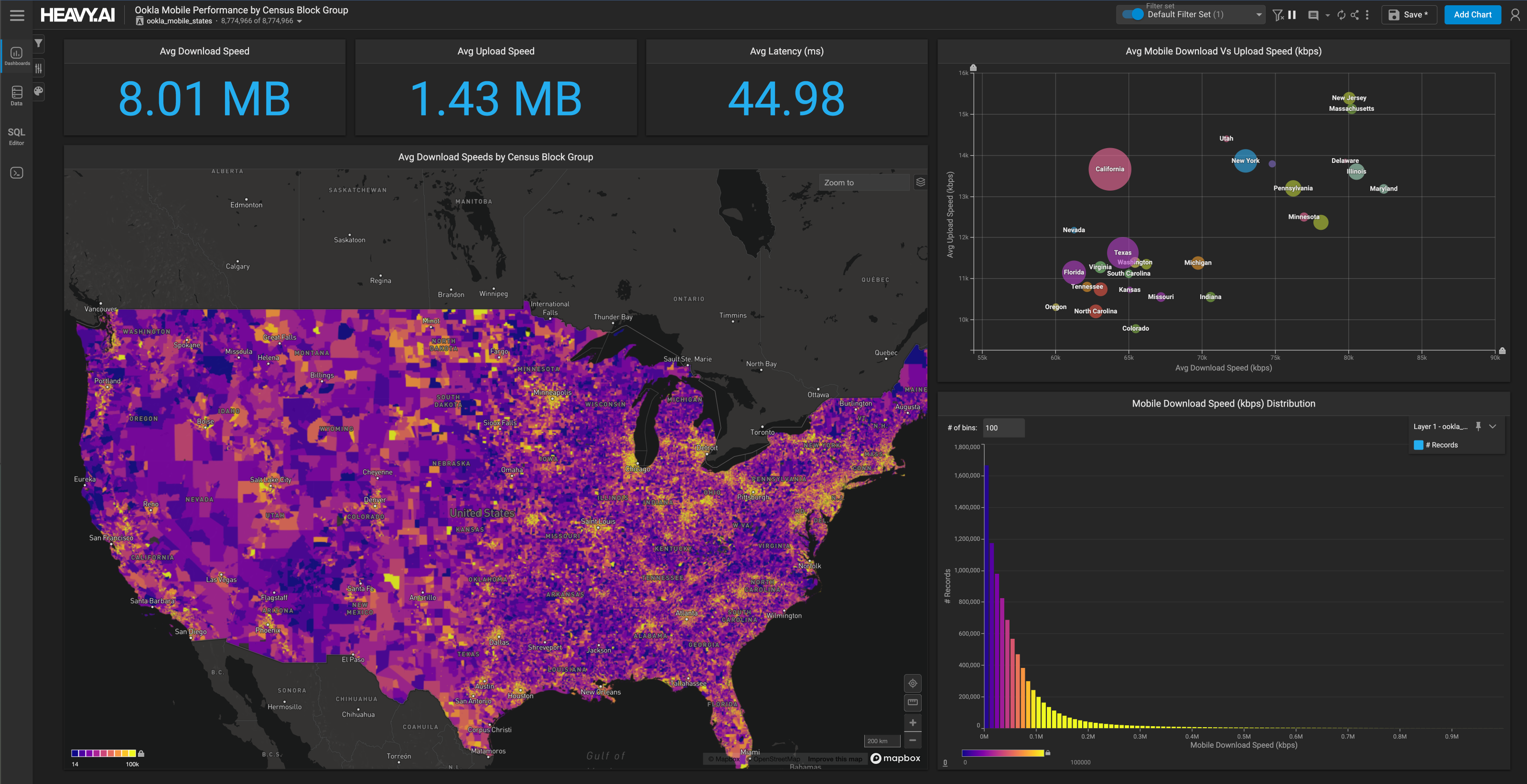

Handy Code Snippets to Improve your Data Science Project Workflow in HEAVY.AI

Data science projects often require a mix of Python scripts, SQL queries, bash commands, Docker CLI commands, and terminal code snippets. This is the nature of cleaning, ETL-ing, munging, and analyzing data, and of maintaining the various data science workflow tools and libraries we use along the way.

Our team keeps a variety of these code snippets handy since we use them all the time. We ran an internal survey to gather these one-liners in one place, and we compiled them into this post so that you can use them in your data science project workflow too!

Connecting to your HEAVY.AI server

We regularly use ssh (Secure Shell) to connect to HEAVY.AI instances hosted on our internal servers or in the cloud. The ssh command lets you use your private key to log in to the server where your HEAVY.AI instance lives. This is the starting point for most data science workflow configuration and troubleshooting. The -i flag stands for identity file (your private key), and all other ssh options are documented in the ssh manual page (man ssh).
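As a minimal sketch of the connection step described above, the helper below wraps ssh with the -i flag. The function name heavy_ssh is hypothetical, and the key path and host are whatever applies to your own setup.

```shell
# Hypothetical helper: open an ssh session using a private key.
# Usage: heavy_ssh <private_key_file> <user@host>
heavy_ssh() {
  # -i selects the identity (private key) file used to authenticate
  ssh -i "$1" "$2"
}
```

For example, heavy_ssh ~/.ssh/my_key.pem ubuntu@heavy.example.com, where both the key file and the host name are placeholders.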

Copying files into or from your HEAVY.AI server

We use SCP (secure copy protocol) to copy files to and from remote instances. The command works similarly to the previous ssh command, except SCP requires the file path of the file you'll send over the wire and its destination path.
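A sketch of the copy step, assuming the same private key you use for ssh. The function name heavy_scp is hypothetical; the remote side is written as user@host:/path, which is how scp addresses a file on another machine.

```shell
# Hypothetical helper: copy a file with scp using a private key.
# Usage: heavy_scp <private_key_file> <source> <destination>
heavy_scp() {
  # Either <source> or <destination> may be remote (user@host:/path)
  scp -i "$1" "$2" "$3"
}
```

To upload, pass a local file first and the remote path second, e.g. heavy_scp ~/.ssh/my_key.pem ./flights.csv ubuntu@heavy.example.com:/tmp/ (placeholder names). Swap the last two arguments to download instead.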

SCP works great in many cases, but rsync is often faster and generally better for moving files. If something happens to your transfer midway through (e.g., your local machine goes to sleep), rerunning rsync skips the files that already arrived, so it picks up roughly where you left off.

The rsync command might look confusing, but it's fairly straightforward. The -av flags combine archive mode (-a) and verbose output (-v). Archive mode bundles a variety of flags (like -r for recursively going through a directory) and is one of the most commonly used options. Verbose lists the files being moved and prints a summary of the transferred data once it's finished. The --progress option gives us a nice percentage counter as a large file gets moved. Finally, the -e option specifies the remote shell that rsync uses for the transfer. This allows us to use ssh and rsync together, since we'll need a private key to access many servers.
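Putting the flags described above together, a sketch of the rsync call could look like this. The function name heavy_rsync is hypothetical, and the key path is assumed to contain no spaces because it is embedded in the -e string.

```shell
# Hypothetical helper: rsync over ssh with a private key.
# Usage: heavy_rsync <private_key_file> <source> <destination>
heavy_rsync() {
  # -a archive mode, -v verbose, --progress per-file progress counter,
  # -e sets the remote shell (here: ssh with an identity file)
  rsync -av --progress -e "ssh -i $1" "$2" "$3"
}
```

For example, heavy_rsync ~/.ssh/my_key.pem ./data/ ubuntu@heavy.example.com:/tmp/data/ (placeholder names). If you also want an interrupted single large file to resume rather than restart, rsync's --partial option keeps the partially transferred file around.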

We covered SCP in depth in How to Load Large CSVs into HEAVY.AI using SCP and COPY FROM.

Review GPU Performance and Clear GPU Memory

GPUs are a big part of what enables HEAVY.AI to be so blazingly fast. Our hardware reference page has a nice image that shows how the HEAVY.AI system stores data on SSD by default but then loads some data into GPU memory for querying, data exploration, and visualization in dashboards.

nvidia-smi is a helpful command for assessing GPU performance and available memory on a system. Should the GPUs run out of memory, running ALTER SYSTEM CLEAR GPU MEMORY from the SQL Editor will free it up.
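A small sketch of two ways to call nvidia-smi. The function names are hypothetical; the second variant uses nvidia-smi's query mode to print only a few memory and utilization columns as CSV, which is handy when you want to log or compare values.

```shell
# Hypothetical helper: full GPU status table.
gpu_status() {
  nvidia-smi
}

# Hypothetical helper: per-GPU memory and utilization as CSV.
gpu_memory_csv() {
  nvidia-smi --query-gpu=index,name,memory.used,memory.total,utilization.gpu --format=csv
}
```

Both must be run on the machine that hosts the GPUs, i.e. after you have connected to the server.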

Tail the logs

System commands and performance data are kept in the log files on a server running HEAVY.AI. 'Tailing the logs' is a way to read off the end of the log files and stream the log data into your terminal. The -f (follow) flag allows for streaming the log files.
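A minimal sketch of following a log file from inside the log directory. The function name follow_log is hypothetical, and the log file name depends on your installation, so it is passed in as an argument rather than hard-coded.

```shell
# Hypothetical helper: stream new lines from a log file in the current directory.
# Usage: follow_log <log_file_name>
follow_log() {
  # -f keeps the file open and prints lines as they are appended
  tail -f "$1"
}
```

Press Ctrl+C to stop following the file.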

The above command requires you to be in the logs directory to run it, while the following command will grab the most recent logs when you're not in the log directory.
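One way to sketch this from any working directory is to pick the most recently modified entry in the log directory and follow it. Both function names are hypothetical, and the log directory location is an assumption you need to supply for your own installation.

```shell
# Hypothetical helper: name of the most recently modified entry in a directory.
# Usage: latest_log <log_dir>
latest_log() {
  # ls -t sorts by modification time, newest first
  ls -t "$1" | head -n 1
}

# Hypothetical helper: follow the newest log in a directory.
# Usage: follow_latest_log <log_dir>
follow_latest_log() {
  tail -f "$1/$(latest_log "$1")"
}
```

This assumes the directory holds only log files with ordinary names; if it contains other entries, pass the full path of the log you want to tail -f directly.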

Tailing the logs is often necessary for troubleshooting issues to improve data science workflow efficiency.

Add a unique id column

Occasionally, you might load a data table into HEAVY.AI without a unique id column. This makes queries with joins or GROUP BYs much more difficult, if not impossible. An ALTER TABLE statement followed by an UPDATE statement will enable you to assign the hidden table rowid as a unique identifier column.
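As a sketch of the two statements described above, the helper below prints the SQL for a given table so you can paste it into the SQL Editor. The function name add_id_sql, the column name id, and the BIGINT type are all assumptions chosen for illustration.

```shell
# Hypothetical helper: print SQL that adds an id column filled from rowid.
# Usage: add_id_sql <table_name>
add_id_sql() {
  cat <<EOF
ALTER TABLE $1 ADD COLUMN id BIGINT;
UPDATE $1 SET id = rowid;
EOF
}
```

For example, add_id_sql flights prints the ALTER TABLE and UPDATE statements for a table named flights (a placeholder name).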

Docker Containers

We often install HEAVY.AI on Docker, which makes maintenance and updates for your data science project workflow more straightforward. This section covers a few of the common one-liners we use with Docker. Note that many of these tips assume the use of Docker configured with an omnisciserver and omnisciweb container and mapping /omnisci-storage within the container to /var/lib/omnisci/omnisci-storage on the host. This is how our HEAVY.AI Amazon Machine Image (AMI) offering is configured.

View Running Docker Containers

This command is a straightforward method for listing your running containers.
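A quick sketch of the listing commands. The function names are hypothetical; docker ps shows running containers only, while adding -a also includes stopped ones, which helps when a container has crashed.

```shell
# Hypothetical helper: list running containers.
list_running_containers() {
  docker ps
}

# Hypothetical helper: list all containers, including stopped ones.
list_all_containers() {
  docker ps -a
}
```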

Restarting your Docker Containers

It's sometimes necessary to restart your Docker containers should you have a process hang or crash. This command will restart the HEAVY.AI server and web server containers.
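A sketch of the restart, assuming the container names omnisciserver and omnisciweb mentioned earlier in this section. The function name restart_heavy is hypothetical; if your containers are named differently, substitute the names shown by docker ps.

```shell
# Hypothetical helper: restart the server and web containers.
restart_heavy() {
  # Container names follow the setup described in this post (assumption)
  docker restart omnisciserver omnisciweb
}
```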

Access OmniSQL through the container

Usually, you'd use OmniSQL from within the SQL Editor tab in Immerse. But you can also access OmniSQL from the command line by using Docker exec to enter the omnisciserver container.
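A sketch of opening OmniSQL inside the container. The function name heavy_omnisql is hypothetical, and the default binary path /omnisci/bin/omnisql is an assumption about where omnisql lives inside the omnisciserver container; pass a different path as the first argument if your image differs.

```shell
# Hypothetical helper: start an interactive omnisql session in the server container.
# Usage: heavy_omnisql [path_to_omnisql_inside_container]
heavy_omnisql() {
  # -i keeps stdin open and -t allocates a terminal for the interactive prompt
  docker exec -it omnisciserver "${1:-/omnisci/bin/omnisql}"
}
```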

What are your go-to snippets for data science project workflows?

Share your favorite data science project workflow snippets with us on LinkedIn, Twitter, or our Community Forums.