Announcing OmniSci 5.0

Nearly a month ago, in October, we proudly hosted our first ever user conference: Converge. With over 275 attendees and nearly 50 sessions, it was a great event for us to meet with and learn from our customers and community about how they’re using OmniSci to help them understand their businesses, their communities, or indeed, the universe at large, by getting answers from their largest datasets at the speed of curiosity.

Part of what made Converge exciting (not to mention intense!) for us is that we got to announce OmniSci 5.0, which showcased several marquee capabilities in the platform and, more broadly, set the stage for where it is headed. Last week, in a culmination of over four months of engineering effort, 5.0 became generally available. With the holiday break, we’ve finally had time to catch our collective breath, dive deeper into this release, and point out all the amazing work that our engineering teams have done, not all of which we had the time or space to show off at Converge.

What is OmniSci 5.0 about?

Let’s step back a bit before we dive in. As the press release around OmniSci 5.0 pointed out, there were some key themes that we set out to address.

First, it is clear that a generational transition is underway in the world of data, driven by Artificial Intelligence and Machine Learning (AI/ML). Until now, OmniSci has been unparalleled at allowing users (aka Human Intelligence) to quickly identify and react to patterns and anomalies in data by breaking free of the scale constraints of current-generation visual analytics tools. While we pride ourselves at OmniSci on keeping up with the speed of human curiosity, it’s clear to us that the next frontier is showing our users where to look in a sea of data. This is where Machine Learning is no longer a choice but a necessity for any kind of analytics, regardless of the neat categories used to date, such as ‘descriptive’, ‘diagnostic’, or ‘predictive’. With OmniSci 5.0, we laid the building blocks to enable Machine Intelligence pervasively and transparently within our platform. Our ultimate goal is to leverage the full power of the same modern hardware that AI already depends on to provide nothing less than an exoskeleton for the analytical mind.

Next, the world of data is far from monochromatic or one-dimensional. It is easy to develop a ‘shallow’ understanding of data using the average BI workflow today, i.e., getting answers to a set of canned questions. Getting insights from data isn’t just about looking deeply at a single dataset, but about being able to incorporate a variety of different perspectives. In OmniSci 5.0, we launched some key features that build on the theme of Data Fusion, allowing users to find and integrate external reference datasets to develop a broader and deeper understanding of their own data.

Finally, the foundation of OmniSci’s value to date is (and continues to be) performance at scale. Like the story about the engineers at Google who had t-shirts saying ‘Fast is my favorite feature’, we know that a user doing anything in OmniSci expects it to happen within human interactive timescales, typically tens of milliseconds. As our feature surface grows, it’s a major goal for us to keep it that way, and to continue exploiting advances in modern hardware in order to do so. Likewise, none of this matters without a baseline level of robustness and stability. While there is always more to do in this regard, we really care about being both effortless and reliable, as you can see from the sheer number of bug fixes that made it into 5.0.

Let’s dive into the key features in OmniSci 5.0 and how they relate to the above themes.

Groups of interesting things, and what they did in space and time - Cohort analysis and the new Filter experience

If you’ve seen OmniSci Immerse in action, you’ll know that nothing captures the OmniSci gestalt better than fluidly moving around billions of data points in space with our geospatial charts, combined with the time zoom capability on the combination bar/line chart (aka ‘Combo’) and crossfiltering. On large datasets, this interactivity allows users to quickly spot trends and anomalies visually. Here’s a simple example of how an entire story plays out in data: the grounding of Boeing 737 MAX 8 planes as seen in crowdsourced data about ADS-B transmissions.

This is powerful by itself, but the natural next question is about ‘following the story’. Data scientists are typically interested in understanding not just overall trends in the behavior of people or things (‘entities’), but also specific, well-defined subgroups of these entities, which may be moving in both space and time. For example, a data scientist or analyst studying users on a website might narrow in on a specific group of users on a given day as a group of interest. Someone studying air traffic patterns with a view to better planning flight paths within US airspace might care about the set of planes moving through a particular region of that airspace on a given day. Yet another example is a data scientist at a retailer studying a particular demographic group, with a view to modeling the propensity of that group to respond to marketing campaigns for a particular brand of soap.

These are all examples of what is commonly called ‘cohort analysis’, which roughly follows the path of first defining a group of entities and then using this group to anchor further analysis. A typical question in cohort analysis is along the lines of ‘What did the entities identified by <a collection of filters> at time t0 do at time t1?’ Cohort analysis has been done before, typically in the context of web analytics; OmniSci builds on this basic idea and takes it to a whole new level. Being able to do this interactively at scale, especially on spatiotemporal data, opens up a completely different paradigm of data exploration. For example, here is a visual cohort definition on nearly 1.5 billion rows of publicly available spatiotemporal data from the San Francisco Muni authority, where we are interested in the trajectories of specific buses that crossed the Golden Gate Bridge.
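Independent of the Immerse UI, the two steps of a cohort question (define the group at t0, then follow it to t1) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not OmniSci’s implementation: the `Ping` record, the integer time buckets, and the `in_box` bounding box (a hypothetical stand-in for "crossed the bridge") are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ping:
    """One position report for an entity (e.g. a bus) in a time bucket."""
    entity_id: str
    t: int      # hypothetical integer time bucket
    x: float    # longitude-like coordinate
    y: float    # latitude-like coordinate


def define_cohort(pings, t0, predicate):
    """Step 1: the cohort is the set of entity ids matching `predicate` at time t0."""
    return {p.entity_id for p in pings if p.t == t0 and predicate(p)}


def follow_cohort(pings, cohort, t1):
    """Step 2: anchor further analysis on the cohort. What did those entities do at t1?"""
    return [p for p in pings if p.t == t1 and p.entity_id in cohort]


def in_box(p, x_min=0.0, x_max=1.0, y_min=0.0, y_max=1.0):
    """Hypothetical region filter standing in for a real geospatial predicate."""
    return x_min <= p.x <= x_max and y_min <= p.y <= y_max


pings = [
    Ping("bus-1", 0, 0.5, 0.5),  # inside the region at t0, so it joins the cohort
    Ping("bus-2", 0, 5.0, 5.0),  # outside the region at t0
    Ping("bus-1", 1, 3.0, 3.0),
    Ping("bus-2", 1, 0.2, 0.2),  # inside at t1, but it was not in the cohort at t0
]

cohort = define_cohort(pings, t0=0, predicate=in_box)
later = follow_cohort(pings, cohort, t1=1)
print(sorted(cohort))                              # ['bus-1']
print([(p.entity_id, p.x, p.y) for p in later])    # [('bus-1', 3.0, 3.0)]
```

Note that `bus-2` is inside the region at t1 but is excluded, because cohort membership is fixed by the filters at t0; that anchoring is what distinguishes a cohort from simply re-applying the same filter later.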

Another key feature underpinning the power of cohort analysis (and complementing it) is the new filter UX in OmniSci 5.0. Oftentimes, users wish to set up filters on different subsets of their data and view the same dashboard by applying different filters. In OmniSci 5.0, we overhauled the basic global filter UI of earlier versions into a much more powerful and flexible experience. Users can now define and save a set of filters on different fields in the dataset, and switch back and forth between these sets easily.

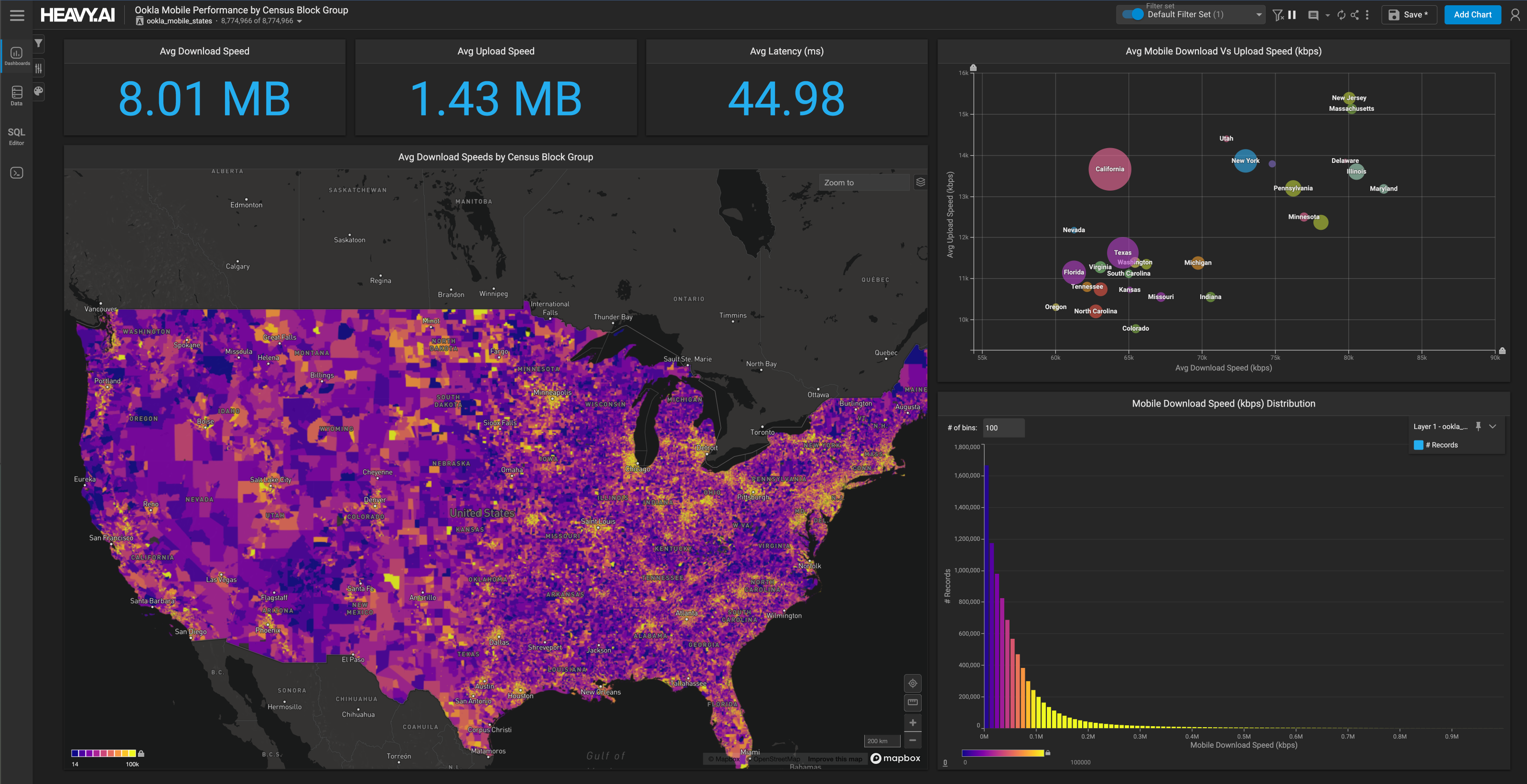

Here’s a great example of this being used on a 3.4-billion-row call quality dataset from our valued partner Tutela, where we’ve created a dashboard of different call quality metrics, with a filter set based on carrier name (it could be any combination of filters, really). Watch how easy it is to switch between three different carriers and compare their performance. As an aside, this is running on powerful new hardware (Optane persistent memory) from Intel, with whom we announced a new strategic partnership to broaden OmniSci’s reach. We’ll be talking about this partnership a lot in the coming weeks and have several exciting developments in store.
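Conceptually, a saved filter set is a named bundle of per-field constraints, and switching sets re-evaluates the same dashboard metrics over a different slice of the data. The Python sketch below assumes a deliberately simplified model in which each filter is an allowed-values set; the field names, carriers, and the `dropped_call_rate` metric are hypothetical and do not reflect the Tutela schema or Immerse internals.

```python
from typing import Any, Dict, List, Set

# Hypothetical model: a filter set maps a field name to the values allowed for it.
FilterSet = Dict[str, Set[Any]]
Row = Dict[str, Any]


def apply_filter_set(rows: List[Row], filter_set: FilterSet) -> List[Row]:
    """Keep rows whose every filtered field holds one of the allowed values."""
    return [
        row for row in rows
        if all(row.get(field) in allowed for field, allowed in filter_set.items())
    ]


def dropped_call_rate(rows: List[Row]) -> float:
    """A stand-in dashboard metric: fraction of calls that were dropped."""
    return sum(row["dropped"] for row in rows) / len(rows) if rows else 0.0


# Saved filter sets; switching between them re-runs the same metric.
saved_sets: Dict[str, FilterSet] = {
    "Carrier A": {"carrier": {"A"}},
    "Carrier B": {"carrier": {"B"}},
    "Carrier B, west": {"carrier": {"B"}, "region": {"west"}},
}

calls = [
    {"carrier": "A", "region": "west", "dropped": 0},
    {"carrier": "A", "region": "east", "dropped": 1},
    {"carrier": "B", "region": "west", "dropped": 1},
    {"carrier": "B", "region": "east", "dropped": 0},
]

for name, filter_set in saved_sets.items():
    print(name, dropped_call_rate(apply_filter_set(calls, filter_set)))
# Carrier A 0.5
# Carrier B 0.5
# Carrier B, west 1.0
```

In this model a cohort fits naturally as just another filter set, for example one constraining an entity-id field to the ids selected at t0.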

The concept really comes in handy when defining and using cohorts, which map naturally to the idea of filter sets (after all, a cohort is really a filter set on a group of entities). We also integrated the two UIs so that cohorts can be saved and applied as filter sets, and the same filter selection UI is available in the cohort builder workflow. A big shoutout to our design team for their work here.

Filter sets and cohorts are both capabilities that take some learning. We are excited to roll this out and happy to listen to any feedback on how it can be made more useful. We’ll continue to iterate on this and improve the experience in future releases.

A matter of (many) perspectives - Immerse Data Catalog

The most active users of OmniSci tend to use it for discovery. Unlike traditional Business Intelligence tools like Tableau, Spotfire, and QlikView, which force users into a narrow set of canned insights, OmniSci lets users explore their data both broadly and deeply within a typically very large dataset, starting with large-scale patterns and then progressively zooming in (in every sense of the word) on much more granular trends and insights at different scales of space and time.

However, this is just the tip of the proverbial iceberg. One staggering aspect of the world of data today is the sheer number of valuable, publicly available datasets that could provide analysis in any domain with additional context and perspective. Among reference datasets alone, you can find online every single building footprint in the United States, OpenStreetMap datasets of every mapped road anywhere in the world, very large Systems Biology datasets like UniProt, and even a catalog of every star in our immediate galactic neighborhood. Beyond this, there are tremendously valuable sources of observational data like the recently released ARCOS dataset or weather radar data from NOAA NEXRAD Level II, not to mention granular transportation datasets like ADS-B (for aviation) and AIS (for shipping). There is a large, rapidly developing industry around curating and publishing these datasets, either as paid commercial offerings or on public repositories like AWS S3.

Meanwhile, within the last year, OmniSci added a set of capabilities we call Visual Data Fusion (VDF). Since OmniSci 4.7, both geospatial charts and time series charts can add new data sources without requiring them to be explicitly joined via SQL. Naturally, VDF opens up the possibility of integrating relevant internal and external datasets into your analysis in a frictionless way. With OmniSci 5.0, we have taken further steps toward making it even more powerful with the experimental launch of our Data Catalog.
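One way to picture what "without requiring an explicit join" means for a time series chart is that each layer is aggregated independently, against its own data source, and the results are lined up only on the shared time axis. The Python sketch below is a conceptual illustration under that assumption, not OmniSci’s VDF implementation; the layer names, timestamps in seconds, and 60-second buckets are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def bucket_counts(timestamps: Iterable[int], bucket: int) -> Dict[int, int]:
    """Aggregate one layer's timestamps into fixed-width time buckets."""
    counts: Dict[int, int] = defaultdict(int)
    for ts in timestamps:
        counts[ts - ts % bucket] += 1
    return dict(counts)


def overlay(layers: Dict[str, Iterable[int]], bucket: int) -> List[Tuple[int, Dict[str, int]]]:
    """Aggregate each layer on its own, then align results on the shared time axis.

    No row-level join between sources ever happens; the only thing the layers
    share is the bucketed time axis the chart draws them on.
    """
    per_layer = {name: bucket_counts(ts, bucket) for name, ts in layers.items()}
    axis = sorted(set().union(*per_layer.values()))
    return [(t, {name: counts.get(t, 0) for name, counts in per_layer.items()}) for t in axis]


# Two unrelated, hypothetical sources drawn on one chart.
for row in overlay({"flights": [0, 5, 61, 62], "alerts": [3, 125]}, bucket=60):
    print(row)
# (0, {'flights': 2, 'alerts': 1})
# (60, {'flights': 2, 'alerts': 0})
# (120, {'flights': 0, 'alerts': 1})
```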

To begin with, our narrow goal with the Data Catalog is for OmniSci to provide a handful of curated public datasets that our users find generally useful in their workflows, such as geographic boundaries and census/demographic information, using a public S3 bucket. To see the catalog in action, watch Rachel Wang’s terrific demo of it during the Converge product keynote.

Looking ahead, we want the Data Catalog to be a lightweight mechanism to disseminate the results of analysis both within and outside an organization. To further this, we also announced a new binary export/restore format in OmniSciDB that allows users to quickly export a compressed, binary representation of a table in OmniSci:

DUMP TABLE tweets TO '/opt/archive/tweetsBackup.gz' WITH (COMPRESSION='lz4');

and restore it to a potentially different OmniSci instance.

RESTORE TABLE tweets FROM '/opt/archive/tweetsBackup.gz' WITH (COMPRESSION='lz4');

This capability is currently available from the omnisql command line, but our goal is to allow users to save and export tables from Immerse as well.

In 5.0, the Data Catalog is an experimental feature that you can turn on and off via a configuration setting. We’re working on several fronts: improving the underlying infrastructure for the catalog itself, so that it can be deployed as a standalone service inside a firewall as a seamless way to share data and analyses, and expanding the collection of curated datasets we will make available to all OmniSci users, both public datasets and those from our growing set of partners.

More than just SQL - setting the foundation for ML/AI with User-Defined Functions

With OmniSci 4.8, we announced the OmniSci Data Science foundation. Our first goal was to converge the traditionally disconnected ‘Business Analyst’ and ‘Data Scientist’ workflows on a common platform and accelerate them. We did this by taking care to fit into the tools and ecosystem that Data Scientists are most familiar with: the PyData stack. We delivered deep integrations with JupyterLab, the next-generation UI for Jupyter, and also a complete exploratory workflow combining a Pandas-like API (Ibis) with interactive data visualization from the Altair project.

As powerful as these capabilities are proving to be, they are only part of the overall picture. A domain expert, say in retail or telco, is not a data scientist. For instance, a user looking at a count of store visits on a time series may notice a spike on a chart and quickly want to diagnose why the spike happened. This involves two distinct problems, neither of which is easy to solve with normal SQL: first, reliably detecting an anomaly, and second (perhaps more valuable), identifying the specific combination of other attributes in the data that correlate with (and perhaps explain) the spike. Another interesting example is identifying spatiotemporal ‘co-travelers’ based on their paths (trajectories), using traditional clustering techniques.
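To make the two problems concrete, here is a minimal Python sketch of one possible approach to each; the post does not say which methods OmniSci uses. For detection it swaps in a common robust choice, the median/MAD "modified z-score" with a 3.5 cutoff; for diagnosis it ranks attribute=value pairs by how much more of the spike day’s rows they account for than of the other days’ rows. The data, field names (`day`, `store`, `promo`), and thresholds are hypothetical, and the two toy inputs are independent of each other.

```python
from collections import Counter
from statistics import median


def find_spikes(series, threshold=3.5):
    """Problem 1: flag indices whose robust (median/MAD) z-score exceeds `threshold`."""
    med = median(series)
    mad = median([abs(v - med) for v in series])
    if mad == 0:
        return []  # no spread around the median, so this simple score is undefined
    # 0.6745 rescales MAD so the score is comparable to a standard z-score.
    return [i for i, v in enumerate(series) if 0.6745 * (v - med) / mad > threshold]


def explain_spike(rows, spike_day, attrs):
    """Problem 2: rank attribute=value pairs by how over-represented they are
    on the spike day versus all other days (difference in share of rows)."""
    spike = [r for r in rows if r["day"] == spike_day]
    base = [r for r in rows if r["day"] != spike_day]
    scored = []
    for attr in attrs:
        spike_counts = Counter(r[attr] for r in spike)
        base_counts = Counter(r[attr] for r in base)
        for value, n in spike_counts.items():
            spike_share = n / len(spike)
            base_share = base_counts[value] / len(base) if base else 0.0
            scored.append((spike_share - base_share, attr, value))
    return sorted(scored, reverse=True)


daily_visits = [100, 98, 103, 101, 99, 102, 100, 97, 250, 101, 99, 100]
print(find_spikes(daily_visits))  # [8]

visits = [
    {"day": 0, "store": "north", "promo": "no"},
    {"day": 1, "store": "south", "promo": "no"},
    {"day": 2, "store": "north", "promo": "yes"},
    {"day": 2, "store": "south", "promo": "yes"},
    {"day": 2, "store": "north", "promo": "yes"},
    {"day": 2, "store": "north", "promo": "no"},
]
print(explain_spike(visits, spike_day=2, attrs=["store", "promo"])[0])  # (0.75, 'promo', 'yes')
```

A median-based score is used rather than a plain mean/standard-deviation z-score because a single large spike inflates the standard deviation it is measured against, which can hide the very outlier being looked for in short series.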

With OmniSci 5.0, we’ve set the foundation for incorporating these types of advanced analytic methods, including Machine Learning, into the heart of the query/visualization workflow with the launch of our User-Defined Functions (UDF) framework. UDFs are a general mechanism for incorporating external functions into the query execution path; in OmniSci’s case, we built a way to integrate them directly with our query compiler and execution infrastructure.

The framework, which is currently experimental, supports row-wise UDFs with scalar as well as array/variable-length inputs and, as of 5.0, table-valued functions (UDTFs), which take a query result set as input and produce a table-valued output in turn. UDFs and UDTFs can be written in both C++ and Python; Python support came out of a collaboration with our friends at Quansight, where we built a remote compiler for LLVM that enables UDF code written in Python/Numba to be JIT-compiled and executed as part of a SQL query. Here’s an example of this whole workflow in action with a simple UDF.
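The difference between the two kinds of functions is easiest to see in their calling semantics. The plain-Python sketch below models only those semantics: a row-wise UDF is invoked once per row and sees only that row’s input, while a UDTF receives the whole input result set and returns a new table. It is an assumption-laden illustration, not OmniSci’s UDF API or the Quansight remote-compiler workflow; `apply_row_udf`, `apply_udtf`, and both example functions are hypothetical names.

```python
from statistics import mean, pstdev
from typing import Callable, Dict, List

Row = Dict[str, float]


def apply_row_udf(rows: List[Row], column: str, udf: Callable[[float], float], out: str) -> List[Row]:
    """Row-wise UDF semantics: called once per row, sees only that row's input."""
    return [{**row, out: udf(row[column])} for row in rows]


def apply_udtf(rows: List[Row], udtf: Callable[[List[Row]], List[Row]]) -> List[Row]:
    """Table-function semantics: sees the whole input result set, returns a new table."""
    return udtf(rows)


def fahrenheit_to_celsius(f: float) -> float:
    # A hypothetical scalar UDF.
    return (f - 32) * 5 / 9


def zscore_table(rows: List[Row]) -> List[Row]:
    # A hypothetical UDTF: it needs the mean and spread of *all* input rows,
    # which a row-wise UDF never gets to see.
    values = [row["temp_c"] for row in rows]
    mu, sigma = mean(values), pstdev(values)
    return [{"temp_c": v, "z": (v - mu) / sigma if sigma else 0.0} for v in values]


readings = [{"temp_f": 32.0}, {"temp_f": 212.0}]
with_c = apply_row_udf(readings, "temp_f", fahrenheit_to_celsius, "temp_c")
print([r["temp_c"] for r in with_c])                       # [0.0, 100.0]
print([r["z"] for r in apply_udtf(with_c, zscore_table)])  # [-1.0, 1.0]
```

Anything that depends on the distribution of the whole input, such as normalization, clustering, or fitting a model, falls on the UDTF side of this line, which is why table functions matter for bringing ML methods into queries.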

While UDFs and UDTFs may appear to be low-level features, they open the door to incorporating machine learning pervasively across the OmniSci platform while staying close to the metal on the query execution path. Over the next several releases, we plan to deliver integrated workflows embedding common machine learning methods, and to publish a whole series of deeper dives into using UDFs to extend OmniSci in powerful ways. Our goal is to make a whole host of accelerated ML methods usable in a truly interactive setting, including integration with OmniSci Render.

More speed of thought, fewer interruptions - a new architecture for High Availability and major performance and stability improvements

As we’ve started to see customers deploy OmniSci in the most demanding, mission-critical situations, we realized we needed to overhaul our HA architecture to be more efficient, particularly in how it uses GPU infrastructure. We have done so in OmniSci 5.0, and we will continue to work on this capability over the next few versions, pushing toward the goal of a k-safety-based HA architecture with optimal recovery time and less need for standby infrastructure.
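"k-safety" generally means a cluster can lose any k nodes without losing data, which is usually achieved by keeping every data shard on at least k+1 distinct nodes. The Python sketch below illustrates that general idea with a simple round-robin replica placement and an exhaustive failure check; it is a textbook illustration, not a description of OmniSci’s HA design, and the node names, shard count, and placement rule are hypothetical.

```python
from itertools import combinations
from typing import Dict, List, Sequence, Set


def place_replicas(num_shards: int, nodes: Sequence[str], k: int) -> Dict[int, List[str]]:
    """Place each shard on k+1 distinct nodes, walking round-robin from the shard id."""
    if k + 1 > len(nodes):
        raise ValueError("k-safety needs at least k+1 nodes")
    return {
        shard: [nodes[(shard + i) % len(nodes)] for i in range(k + 1)]
        for shard in range(num_shards)
    }


def survives(placement: Dict[int, List[str]], failed: Set[str]) -> bool:
    """True if every shard still has a replica on some live node."""
    return all(any(n not in failed for n in replicas) for replicas in placement.values())


def tolerates(placement: Dict[int, List[str]], nodes: Sequence[str], f: int) -> bool:
    """Check every possible combination of f simultaneous node failures."""
    return all(survives(placement, set(down)) for down in combinations(nodes, f))


nodes = ["n0", "n1", "n2", "n3"]
placement = place_replicas(num_shards=8, nodes=nodes, k=1)
print(tolerates(placement, nodes, 1))  # True: any single node can fail
print(tolerates(placement, nodes, 2))  # False: losing n0 and n1 loses shard 0
```

In a scheme like this, every node holds live replicas rather than sitting idle as a dedicated standby, which is one way such designs can reduce standby infrastructure; the trade-off is storing each shard k+1 times.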

OmniSci 5.0 is not just about the above features, though. Throughout the lead-up to 5.0, the OmniSciDB team landed foundational performance improvements in several areas of the execution engine. Taken together, these improvements result in up to an 8x query performance increase for certain classes of aggregate queries. We also improved the way Immerse sends queries to OmniSciDB, resulting in further performance gains, and our engineering team did all this while adding the powerful new capabilities described earlier.

As I mentioned earlier, none of these exciting new features in OmniSci 5.0 would matter if we did not continually push towards greater stability and reliability with each release. In 5.0, we put on our best exterminator outfit and fixed nearly 200 bugs large and small, making it even more stable and reliable in addition to the performance improvements above.

Speed at any scale - some closing thoughts

OmniSci 5.0 represents the capstone of a year full of amazing product-related achievements for our engineering team, whether it was uncovering our dark side at GTC, going to Jupyter, or zooming around in space and time.

In closing, I’ll leave you with a teaser for what’s in store next year as we push to make OmniSci available to everyone to answer big questions at the speed of curiosity. Here is what Todd was cooking up during Thanksgiving. OmniSci is capable of scaling down as well as it scales up or out. Stay tuned for our plans in this direction in the upcoming months!

We’re excited to make 5.0 available to our customers and user community. New users can access OmniSci 5.0 in a variety of ways: download a pre-built version of our Open Source edition, sign up for our 30-day Enterprise Trial on our downloads page, or get instant access with a free 14-day trial by signing up for OmniSci Cloud.

On a Mac, installing the OmniSci Open Source edition is as simple as:

conda install -c conda-forge omniscidb-cpu

If you have a Linux machine with a GPU, you can use:

conda install -c conda-forge omniscidb-gpu

As always, we look forward to your valuable feedback - thank you!