World's Fastest Analytics

Key Features

The world's leading accelerated database, for everyone from startups to global enterprises.

Native SQL Engine

Natively supports standard SQL, with performance up to hundreds of times faster than CPU-only platforms.

HeavyDB natively supports standard SQL, so that analysts and data scientists can use their existing SQL skills while taking advantage of performance up to a hundred times faster than CPU-only data warehouses.

HeavyDB can operate as a standalone SQL engine or be used to power the HeavyImmerse visual analytics component of the HEAVY.AI platform for interactive visualization of your data.

Additionally, HeavyDB can accelerate third-party BI engines such as Tableau, and data scientists can use the Python connector, which natively supports Apache Arrow, to interact efficiently with the system from notebooks and custom apps.

Fast Geospatial Operations

Native support for Open Geospatial Consortium (OGC) data types with GPU-accelerated geospatial operator support.

HeavyDB can store and query spatial data using Open Geospatial Consortium (OGC) types and functions. With fast geospatial join support, HeavyDB is the ideal platform for scalable, interactive spatiotemporal analytics.

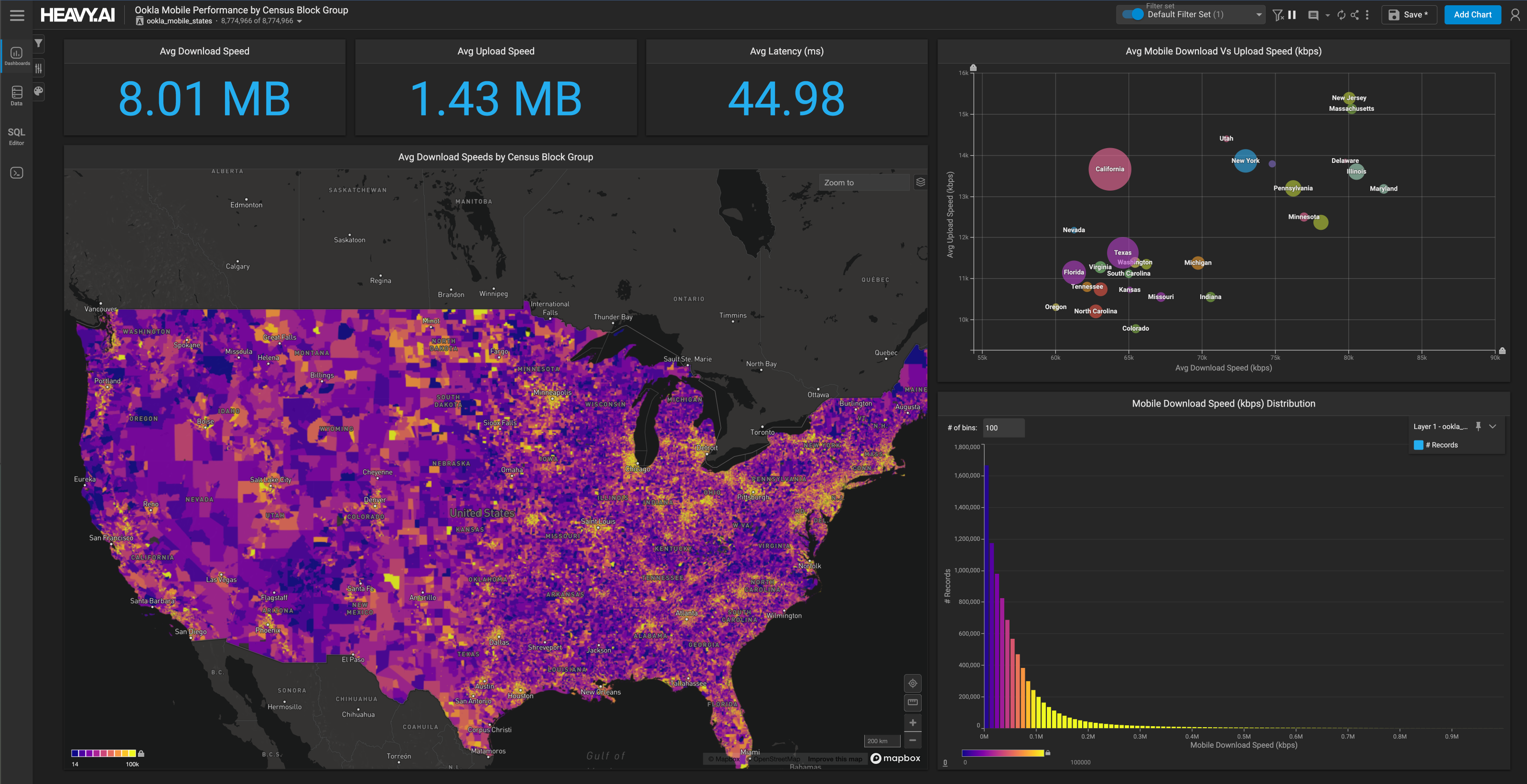

HeavyDB also supports zero-copy rendering of large geospatial data in the system with the HeavyRender module, allowing instant visualization of billions of geospatial features (including polygons) in a way that traditional BI and GIS engines cannot.

Fast, Scalable Data Rendering

Generate interactive server-side visualizations of data in its full granularity.

The HeavyRender module in HeavyDB uses the system’s GPUs to interactively render massive datasets. Driven by the widely adopted and open Vega visualization grammar, client applications (including but not limited to HeavyImmerse) can generate near-instant pointmaps, lineups, heat maps, choropleths, scatterplots, and other visualizations of SQL query results. Because the data is rendered directly on the GPU with zero copies, even billions of data points can be visualized fully interactively, supporting immersive, effortless exploration of granular data at scale.

Parallel Query Execution

Takes advantage of all the hardware of your system (both GPUs and CPUs) to execute queries with massive parallelism.

A key factor behind the unrivaled performance of the HeavyDB SQL engine is how it uses all hardware resources for massively parallel execution of queries. Not only does it take advantage of the tens of thousands of cores across multiple GPUs in a server, but it can also leverage the multiple cores and vector execution units of CPUs. When combined with optimized query compilation, HeavyDB yields performance often hundreds of times faster than CPU-based data warehouses, without needing to index, downsample, or pre-aggregate data.
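The general idea of data-parallel aggregation can be sketched in a few lines of Python. This is only an illustration of the pattern (split the data, aggregate each piece independently, combine the partial results), not HeavyDB code; the function names `partial_sum_count` and `parallel_average` are hypothetical, and Python threads stand in for the GPU and CPU cores a real engine would use.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum_count(chunk):
    """Aggregate one chunk independently and return (sum, count)."""
    return sum(chunk), len(chunk)


def parallel_average(values, num_workers=4):
    """Split values into chunks, aggregate each chunk concurrently, then combine.

    Illustrative only: a real engine would dispatch chunks to GPU/CPU cores.
    """
    if not values:
        raise ValueError("values must not be empty")
    # Ceiling division so every value lands in exactly one chunk.
    chunk_size = max(1, -(-len(values) // num_workers))
    chunks = [values[i:i + chunk_size] for i in range(0, len(values), chunk_size)]
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(partial_sum_count, chunks))
    # Combine step: partial sums and counts merge without revisiting the data.
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count
```

Because each chunk produces a small partial result, the combine step stays cheap no matter how many rows were scanned, which is why this pattern works without pre-aggregating the data.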

Advanced Memory Management

Designed to keep frequently accessed data in GPU memory for maximum performance.

HeavyDB delivers unmatched performance by keeping hot data in GPU memory for lightning-fast analytics. Unlike other GPU databases that store data in CPU memory and transfer it to the GPU at query time, HeavyDB minimizes transfer delays by caching recent data in GPU High Bandwidth Memory (HBM), which offers up to 100x the bandwidth of CPU DRAM.
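To make the caching idea concrete, here is a minimal sketch of a least-recently-used cache with a fixed byte budget. It assumes an LRU eviction policy purely for illustration; the text above does not specify the policy HeavyDB uses, and the class name `HotChunkCache` and its methods are hypothetical.

```python
from collections import OrderedDict


class HotChunkCache:
    """Toy model of a fixed-size fast memory that keeps recently used chunks.

    Assumption: least-recently-used eviction, chosen only for illustration.
    """

    def __init__(self, capacity_bytes):
        self.capacity_bytes = capacity_bytes
        self.used_bytes = 0
        self._chunks = OrderedDict()  # chunk key -> size in bytes, oldest first

    def access(self, key, size_bytes):
        """Return True on a hit; on a miss, load the chunk and return False."""
        if key in self._chunks:
            self._chunks.move_to_end(key)  # mark as most recently used
            return True
        if size_bytes > self.capacity_bytes:
            raise ValueError("chunk is larger than the cache capacity")
        # Evict the least recently used chunks until the new one fits.
        while self.used_bytes + size_bytes > self.capacity_bytes:
            _, evicted_size = self._chunks.popitem(last=False)
            self.used_bytes -= evicted_size
        self._chunks[key] = size_bytes
        self.used_bytes += size_bytes
        return False
```

A hit means the query can read the chunk from fast memory directly; a miss models the transfer that the caching is meant to avoid.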

Just-In-Time Query Compilation

Compilation framework built on LLVM generates custom, optimized code for each query.

HeavyDB is built around an optimized just-in-time (JIT) compilation framework based on LLVM to yield the best possible performance. By pre-generating compiled code for the query, HeavyDB avoids many of the memory bandwidth and cache inefficiencies of traditional approaches that rely on interpreters for query execution.

To ensure maximum reuse of compiled query plans, the system caches templated code such that queries with the same structure and types can use the same code, even if columns or literals change. This is particularly important for HeavyImmerse usage, which allows users to rapidly cross-filter large datasets by changing filter parameters with clicks, brushes, and zoom interactions.
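The reuse of templated code can be illustrated with a small sketch. It is not HeavyDB's implementation: it only strips literals from the SQL text with a regular expression and uses the result as a cache key, whereas the real system works on query structure and types and also tolerates column changes. The names `to_template` and `CompiledPlanCache` are hypothetical, and the "plan" is a placeholder string rather than compiled code.

```python
import re

# Matches single-quoted string literals or standalone numeric literals.
_LITERAL = re.compile(r"'(?:[^']|'')*'|\b\d+(?:\.\d+)?\b")


def to_template(sql):
    """Replace each literal with '?' and return (template, extracted literals)."""
    params = []

    def _swap(match):
        params.append(match.group(0))
        return "?"

    template = _LITERAL.sub(_swap, sql)
    # Collapse whitespace so formatting differences do not defeat the cache.
    return " ".join(template.split()), params


class CompiledPlanCache:
    """Caches one stand-in 'compiled plan' per query template."""

    def __init__(self):
        self._plans = {}
        self.compilations = 0

    def get_plan(self, sql):
        template, params = to_template(sql)
        if template not in self._plans:
            self.compilations += 1  # a real engine would run JIT compilation here
            self._plans[template] = f"plan#{self.compilations}"
        return self._plans[template], params
```

With this sketch, `... WHERE delay > 15` and `... WHERE delay > 60` map to the same template, so moving a filter slider would trigger only one compilation.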

Open Source

Facilitates Workflows

Provides a framework that facilitates the orchestration of advanced data science and predictive analytics workflows.

Models Included

Includes four regression models and two clustering models for training, evaluation, and inference on your large datasets.

Utilizes HeavyDB

Harness new native SQL and visualization capabilities to execute ML and predictive operations directly within HeavyDB.
