The Waiting is the Hardest Part

Who would have thought that analytics would become the bottleneck in the modern organization?

Products like Tableau, Qlik and others took the enterprise by storm in the years following the Great Recession, fueled by plummeting storage costs and correspondingly skyrocketing amounts of data.

The convergence of these two forces helped democratize information across organizations the world over and powered productivity enhancements and the knowledge economy as a whole. Business Intelligence became an industry unto itself.

Then something happened. All that momentum dissipated. The engine locked up.

Why?

The sources of that growth, great software and inexpensive storage, collapsed under their own weight.

The amount of data available continued to grow exponentially and, if you believe some of the IoT analytics projections, that growth is only going to accelerate. Meanwhile, CPU processing power, which drives the analytic capabilities around big data, has stalled out.

And so we began to wait. Seconds at first. Then minutes. Occasionally overnight.

What were we waiting for?

Queries to complete.

The answers to our questions to come back from overtaxed server farms using legacy CPU technology. The more data the query targets, the longer the wait, and as we have just noted, we have more data to query every minute.

So we wait longer.

This is fundamentally antithetical to our goals as technologists, but it is the problem that enterprises face today. We are wasting the time of our most valuable analytical assets, from data scientists to the analysts who are closest to the customer. The waiting, while painful, is also expensive, running to millions of dollars per year in lost productivity for even medium-sized organizations.

And so what’s the response?

Analysts take data off the table. 100 million rows here, a couple dozen columns there, another 200 million rows over there. Make it manageable. Keep it small enough that I can respond in a timely manner and don’t have to go back to IT or Engineering for the third time this week.

This subset should be good enough, right?

Not really. By artificially reducing the amount of data we look at, we introduce risk, bias and other suboptimal outcomes that can lead to bad decision making. These carry financial costs of their own that enterprises are just starting to quantify.
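To make the bias concrete, here is a minimal sketch in Python. The table, its values and the 200-row cutoff are all hypothetical and chosen only for illustration: the data is sorted by date, newer orders are larger, and the "manageable" subset is simply the first rows that fit.

```python
def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)

# Hypothetical table: 1,000 order amounts sorted by date.
# Amounts drift upward over time: 10 for the first 100 rows, 11 for the next 100, ... 19 for the last.
amounts = [10 + i // 100 for i in range(1000)]

# The "manageable" subset: just the first 200 rows.
subset = amounts[:200]

full_mean = mean(amounts)    # average over every row
subset_mean = mean(subset)   # average over the truncated table

print(f"full table: {full_mean}, subset: {subset_mean}")
```

In this toy case the subset reports an average of 10.5 against a true average of 14.5, because the rows that were dropped are exactly the ones that differ. Real data is rarely this tidy, but the mechanism is the same whenever the rows you cut are not a random sample.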

Something needs to balance the equation between exponential data growth and sluggish CPU processing growth.

That something is the Graphics Processing Unit (GPU).

GPUs were originally developed for video games, but enterprising computer scientists realized that the term “commodity” had a whole new meaning when it came to these chips. Whereas “commodity” CPUs had four relatively sophisticated processing cores, GPUs boasted thousands.

That is right. Thousands.

GPUs were designed to do mathematical calculations quickly in order to render polygons onto a screen. A lot of polygons can create some hyper-realistic images. The kind that have made the video game industry into a $100B behemoth that is about to get its rockstar on with the arrival of big-time e-sports.

Those same cores can solve different types of problems, up to 1000x faster than their CPU cousins, if you know how to parallelize your code.

With the advent of CUDA from Nvidia and of OpenCL, parallelizing your code became more approachable. Couple that with ever more powerful GPUs and you had what you needed to balance the equation.

While there is still a considerable amount of technical acumen required to optimize code for GPUs (see our posts on LLVM and Backend Rendering), companies like ours are pioneering the work in this space.
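What "parallelizing your code" means in practice can be sketched in a few lines. The example below is plain Python rather than CUDA or OpenCL, and the names `partial_sum` and `parallel_sum` are ours, invented for illustration. It shows only the shape of a data-parallel aggregation: split a column into chunks, let each worker reduce its own chunk, then combine the partial results. A GPU applies the same pattern across thousands of cores; Python threads will not deliver that speedup, so treat this as a picture of the structure, not of the performance.

```python
from concurrent.futures import ThreadPoolExecutor

def partial_sum(chunk):
    """Work done independently by one worker: reduce its own slice."""
    return sum(chunk)

def parallel_sum(values, workers=4):
    """Sum a column by splitting it into chunks and combining partial sums."""
    # Ceiling division so every value lands in exactly one chunk.
    size = max(1, -(-len(values) // workers))
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each chunk is reduced independently; the final sum combines the partials.
        return sum(pool.map(partial_sum, chunks))

column = list(range(1000))  # hypothetical column of values
total = parallel_sum(column)
print(total)
```

The key property is that no chunk depends on any other, which is what lets the work spread across as many cores as the hardware offers.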

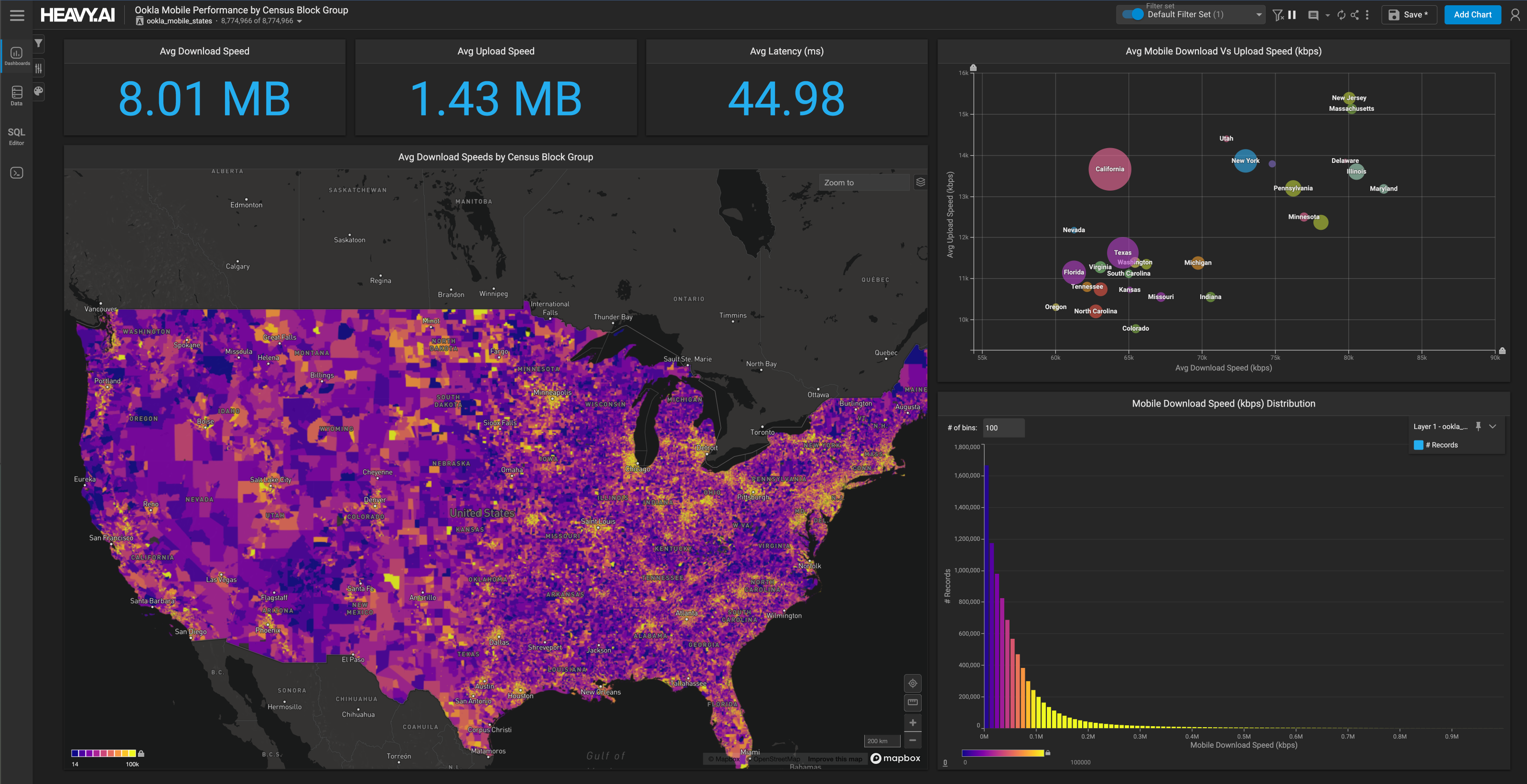

You don’t have to compromise anymore. You don’t have to choose between waiting or downsampling.

Craft your question and let it fly. Oh yeah, and by using the native graphics pipeline on the GPU, you can render the answer beautifully and instantaneously.

Let’s put an end to the costs, both direct and indirect, associated with waiting on our data. The data won’t stop growing, so the challenge now is to identify solutions that scale with it.

If you want to learn more, drop us a line at info@mapd.com and we can get you set up with a personalized demo.