Predict and Change the Future of Your Network with Multiple Data Sources

Note: OmniSci is now HEAVY.AI.

With billions in revenue at stake, smart telecom providers are retaining customers using real-time insights to improve network performance.

Big Data, Big Expectations

The telecom industry is approaching market saturation, forcing providers to compete harder than ever and to pay closer attention to metrics such as customer acquisition cost (CAC), average revenue per user (ARPU), and customer churn. The smartest providers understand the tight relationship between customer satisfaction and their massive, and growing, stockpiles of data.

In the webcast, Predict and Change the Future of Your Network with Multiple Data Sources, Fraser Pajak, President of Fraser Pajak Consulting, presented the results of a survey designed to study the causes of customer churn. Studies of network operations and quality often rely on objective measurements of network performance. With a goal of learning what drives churn in highly competitive markets, researchers adopted a new approach, looking at the subjective data to measure the effect of customer perception of network quality. The study surveyed nearly 7,000 people and found that fewer than 10 measures drive 80% of customer loyalty behavior.

Bottom line: network issues have far greater potential to damage customer loyalty than good network performance has to strengthen it.

Finding Switchers, Making Saves

The study found that fully 10% of people fell into one of two at-risk categories: those who definitely plan to switch, and those who want to switch but see it as too much effort. The financial impact of those cohorts amounts to billions in revenue.

Ultimately, the challenge of minimizing customer churn is a big data problem. The data exists, but carriers struggle to harness its benefits. The ability to visualize and analyze large datasets has not kept up with rampant data accumulation. The overhead of pre-processing and data preparation often impedes real-time data visualizations and limits the number of hypotheses that can be validated. Data and workflows are siloed across the organization, so related data sets can’t easily be combined to gain valuable insights.

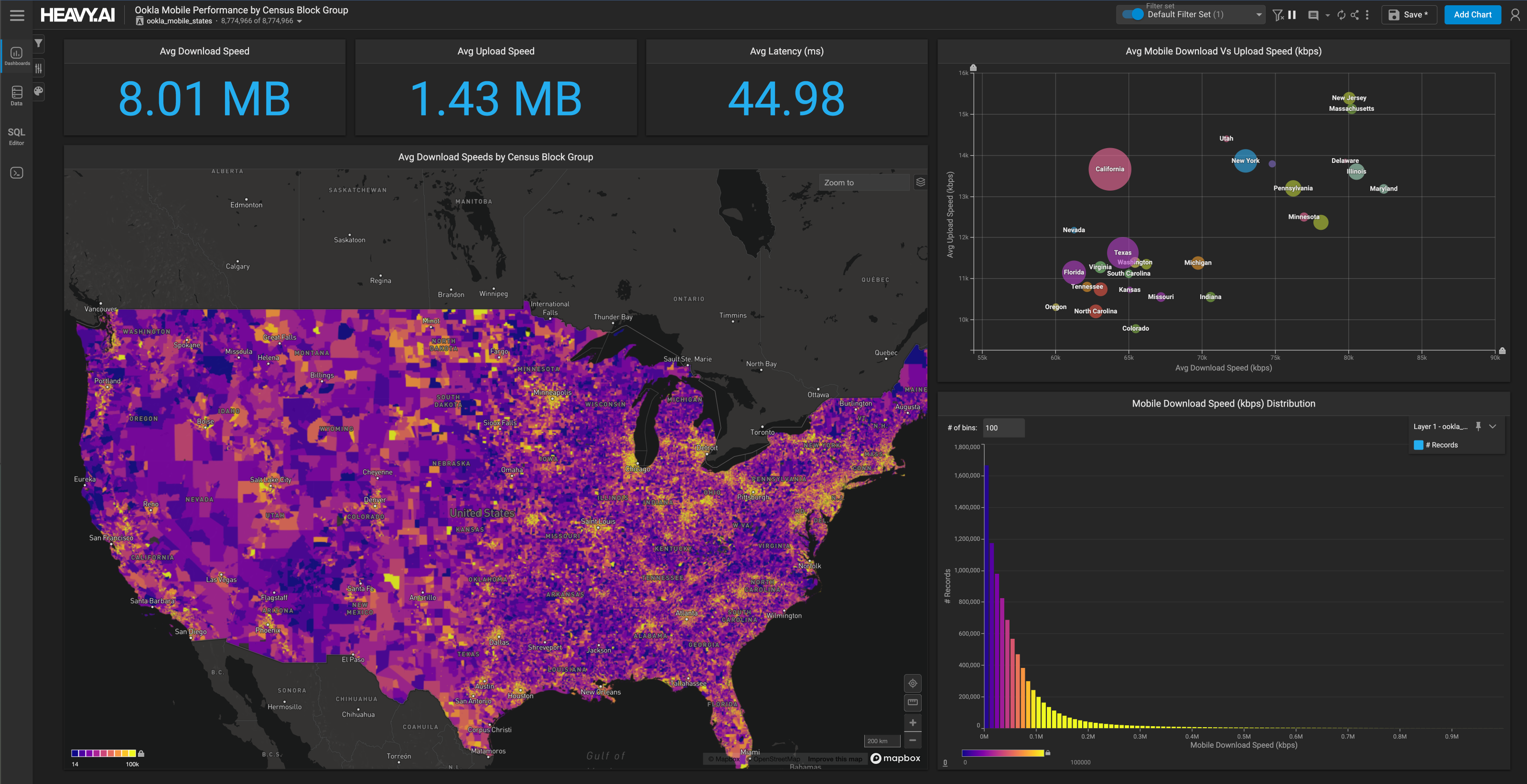

Fortunately, there are new technologies that can handle billions of rows of data, joined across multiple datasets, with millisecond filtering and visualization times. OmniSci is in the vanguard of this movement toward next-generation massively scaled, no-code visualization, helping to transform telecom providers’ network and customer data into an asset instead of a barrier.

OmniSci’s mission is to make analytics instant, powerful, and effortless for everyone. Combining 3-tier memory caching (which identifies hot, warm, and cold data), distributed query compilation, and GPU-powered rendering, OmniSci Immerse sits at a critical juncture between siloed data sources and AI and machine learning tools. Data sources may be as varied as geospatial data, cloud applications, databases, and in-office applications on the local network. OmniSci ingests the data from these sources and exposes it to the Vega Rendering Engine, which can work with AI applications to further identify patterns. The results are displayed through the real-time interactive Immerse Visualization System.

Hong Kong Insights

To demonstrate OmniSci’s capabilities, Eric Kontargyris, Director of Sales Engineering at OmniSci, analyzed data sources from a highly fluid and unpredictable event: the Hong Kong protests of January 2020. Few carriers will face such a sudden concentration of customers actively using the network, which makes it a demanding test case for how carriers can respond. A bad experience in a moment like this could cause customers to churn.

The scenario relies on four disparate data streams: tower data; crowd-sourced data from users’ handsets; social media from the crowds; and synthesized core network data.

Data was gathered from nearly 200,000 devices, 12,000 towers, and 2,000 cell site routers, and the datasets ranged from 50,000 rows to 1.4 billion rows. The datasets were overlaid without having to perform any join operations, even though their sampling intervals varied. For example, tower data was reported at 15-minute intervals, crowd-sourced data was intermittent, coordinate data was reported every 30 seconds, and social network data was reported as it was generated.
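To make the idea of overlaying streams with different sampling intervals concrete, here is a minimal Python sketch. It is not how OmniSci does it internally; it simply averages each stream into 15-minute buckets (an assumed common grid that matches the tower interval) and lines the buckets up by time. The function names, field names, and sample values are all invented for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean

BUCKET_MINUTES = 15  # assumed common grid, matching the tower reporting interval


def bucket_start(ts: datetime) -> datetime:
    """Floor a timestamp to the start of its bucket."""
    floored_minute = ts.minute - ts.minute % BUCKET_MINUTES
    return ts.replace(minute=floored_minute, second=0, microsecond=0)


def to_buckets(samples):
    """Average (timestamp, value) samples within each bucket."""
    grouped = defaultdict(list)
    for ts, value in samples:
        grouped[bucket_start(ts)].append(value)
    return {start: mean(values) for start, values in grouped.items()}


def overlay(**streams):
    """Line up several streams on one time axis; missing buckets stay None."""
    bucketed = {name: to_buckets(samples) for name, samples in streams.items()}
    all_starts = sorted(set().union(*(b.keys() for b in bucketed.values())))
    return [
        {"bucket": start, **{name: b.get(start) for name, b in bucketed.items()}}
        for start in all_starts
    ]


# Invented sample data: one tower reading per 15 minutes, handset readings every 30 seconds.
t0 = datetime(2020, 1, 1, 12, 0)
tower = [(t0, 40.0), (t0 + timedelta(minutes=15), 900.0)]
handset = [(t0 + timedelta(seconds=30 * i), 30.0 + i) for i in range(4)]

rows = overlay(tower_latency_ms=tower, handset_latency_ms=handset)
for row in rows:
    print(row)
```

Intermittent streams simply leave gaps (`None`) in buckets where nothing was reported, which is usually preferable to inventing values for them.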

With a volatile protest, cell data usage is likely to spike unpredictably as events occur, and the focus of the analysis is on network data throughput and latency. As the data is overlaid on the same chart over time, spikes in latency quickly appear. The map shows latency hot-spots over time, and enables zooming in to focus on the sampled devices in the high-latency area. Kontargyris drilled into one of these latency spikes and navigated to the worst-affected area on the map, revealing the upload and download behavior and individual towers and routers contributing to the latency.

The tower data tells only part of the story, and too late to prevent the performance issues. Latency jumps from 36 milliseconds to 6.5 seconds. Data uploads spike higher than the download rate, suggesting users uploading photos or video of an event unfolding. Social media data shows tweets complaining of poor service and difficulty sharing media. Customers are not only dissatisfied; they’re disgruntled and complaining openly over social media. The data suggests that one router has failed, affecting multiple towers.
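The two signals described above, a latency jump and uploads overtaking downloads, can be sketched in a few lines of Python. This is an illustrative assumption about how one might flag them, not the method used in the demo: the window size, the 10x factor, and the function names are hypothetical, and the sample series is invented apart from the 36 ms and 6.5 second figures quoted in the text.

```python
from statistics import median


def find_latency_spikes(latencies_ms, window=5, factor=10.0):
    """Return indexes whose latency exceeds `factor` times the median of the
    preceding `window` samples. Both parameters are illustrative guesses."""
    spikes = []
    for i in range(window, len(latencies_ms)):
        baseline = median(latencies_ms[i - window:i])
        if latencies_ms[i] > factor * baseline:
            spikes.append(i)
    return spikes


def upload_heavy(samples):
    """Return indexes where upload throughput exceeds download throughput."""
    return [i for i, (up, down) in enumerate(samples) if up > down]


# Invented series; only the 36 ms and 6,500 ms values echo the article.
latency = [36, 38, 35, 37, 36, 40, 6500, 6200, 39]
spikes = find_latency_spikes(latency)

# Invented (upload, download) throughput pairs in Mbps.
throughput = [(2.0, 8.0), (9.0, 3.0)]
heavy = upload_heavy(throughput)

print(spikes, heavy)
```

Using the median of the preceding window as the baseline keeps a single extreme reading from dragging the baseline up, so consecutive spike samples are still flagged.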

The Biggest Picture Possible

With this level of analysis, telecom providers can not only watch an event unfold, they can also monitor the available data sources, such as routers, CPU, memory, and temperature. Using this data, they can predict equipment failures and proactively replace the failing equipment before it costs them a slice of their customers and reputation. Artificial intelligence and machine learning can plug into this analysis to provide alerts and actionable data.
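As a rough illustration of what predicting a failure from equipment telemetry could look like, the sketch below fits a straight line to temperature readings and estimates when the trend would cross a limit. Everything here is an assumption made for the example: the function name, the 80-degree threshold, and the readings are invented, and a production system would likely use a far richer model than a linear trend.

```python
def hours_until_threshold(readings, threshold):
    """Fit a least-squares line to (hour, value) readings and estimate how many
    hours after the last reading the fitted line reaches `threshold`.

    Returns None when there is too little data or the trend is flat or falling,
    and 0.0 when the fitted line is already at or past the threshold.
    """
    n = len(readings)
    if n < 2:
        return None
    mean_x = sum(x for x, _ in readings) / n
    mean_y = sum(y for _, y in readings) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in readings)
    if sxx == 0:
        return None  # all readings share one timestamp, so no trend can be fitted
    slope = sum((x - mean_x) * (y - mean_y) for x, y in readings) / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_x = (threshold - intercept) / slope
    return max(0.0, crossing_x - readings[-1][0])


# Invented hourly router temperatures in degrees Celsius.
router_temps = [(0, 60.0), (1, 62.0), (2, 64.0), (3, 66.0)]
eta = hours_until_threshold(router_temps, threshold=80.0)
print(eta)
```

An estimate like this could feed an alert that gives operations staff lead time to replace a unit, which is the kind of proactive action the paragraph above describes.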

OmniSci Immerse provides a next-generation user experience for data and insights. It enables telecom providers to visualize and analyze multiple data sources, recognize failure patterns, and take timely action, empowering them to predict and prevent the network issues that lead to customer churn and lost revenue.

Learn more

- OmniSci Telecom Industry Use Cases

- Solution Brief: Accelerated Analytics and Data Science for the Telecom Industry

- Download OmniSci Free, a full-featured version available for use at no cost
