Overcoming Limitations of GIS: How the Public Sector Is Looking to Converged Analytics to See Further

Note: OmniSci is now HEAVY.AI.

Federal and civilian agencies in the public sector are grappling with complex scenario planning and response -- from mission-critical situations to vaccine delivery logistics and wildfire control to population growth. They need a way to glimpse into the future, to predict what might happen so they can plan and prepare. Increasingly their approach relies on large, fast-moving spatio-temporal datasets.

Public sector agencies often share a similar goal: situational awareness and a common operational picture, built from just-in-time spatial data drawn from a variety of sources such as satellites and sensors. The irony is that a lack of data is not the problem; the problem is usually that there is too much data to view holistically and turn into value.

As organizations in the public sector try to build that common operational picture, they run up against the limits of the current generation of geospatial intelligence technology, in particular the difficulty geographic information system (GIS) platforms have in exploring massive datasets. The majority of those GIS platforms are CPU-based, which at scale imposes speed and throughput limitations on GIS mapping and constrains analysts’ ability to interrogate the data interactively.

Massive infrastructure underpins geospatial intelligence, supporting countless use cases: census analysis, flood prevention, shipping control. That infrastructure entails many different data management systems, with tools running on Oracle Spatial and Graph, SQL Server or PostgreSQL. Each of these runs into fundamental processing constraints, because the volume of data it must analyze takes so long to work through. There’s no way to explore the data on the fly, which is a problem in scenario planning and for rapid response to disasters.

The public sector has also broadly adopted big data infrastructure, in particular Spark and Hadoop, along with many different data formats and repositories that they want to query against. Many routines are built around these datasets, making the pipeline extremely difficult to accelerate. Today, none of this big data infrastructure is as performant as many agencies would like.

These limitations of GIS analysis have a direct impact on the public sector’s ability to understand and respond to a dynamic array of threats and opportunities.

Accelerating analytics and operationalizing machine learning

Based in San Francisco, OmniSci pioneered the use of NVIDIA GPUs to interactively query and visualize massive datasets in milliseconds. They foresaw the need for a step-change in analytics to harness the massive parallelism of GPUs given the exponential growth of data, especially geospatial data. OmniSci works closely with NVIDIA, which was an early investor in the company. Today, OmniSci is used in multiple government agencies and is backed by In-Q-Tel.

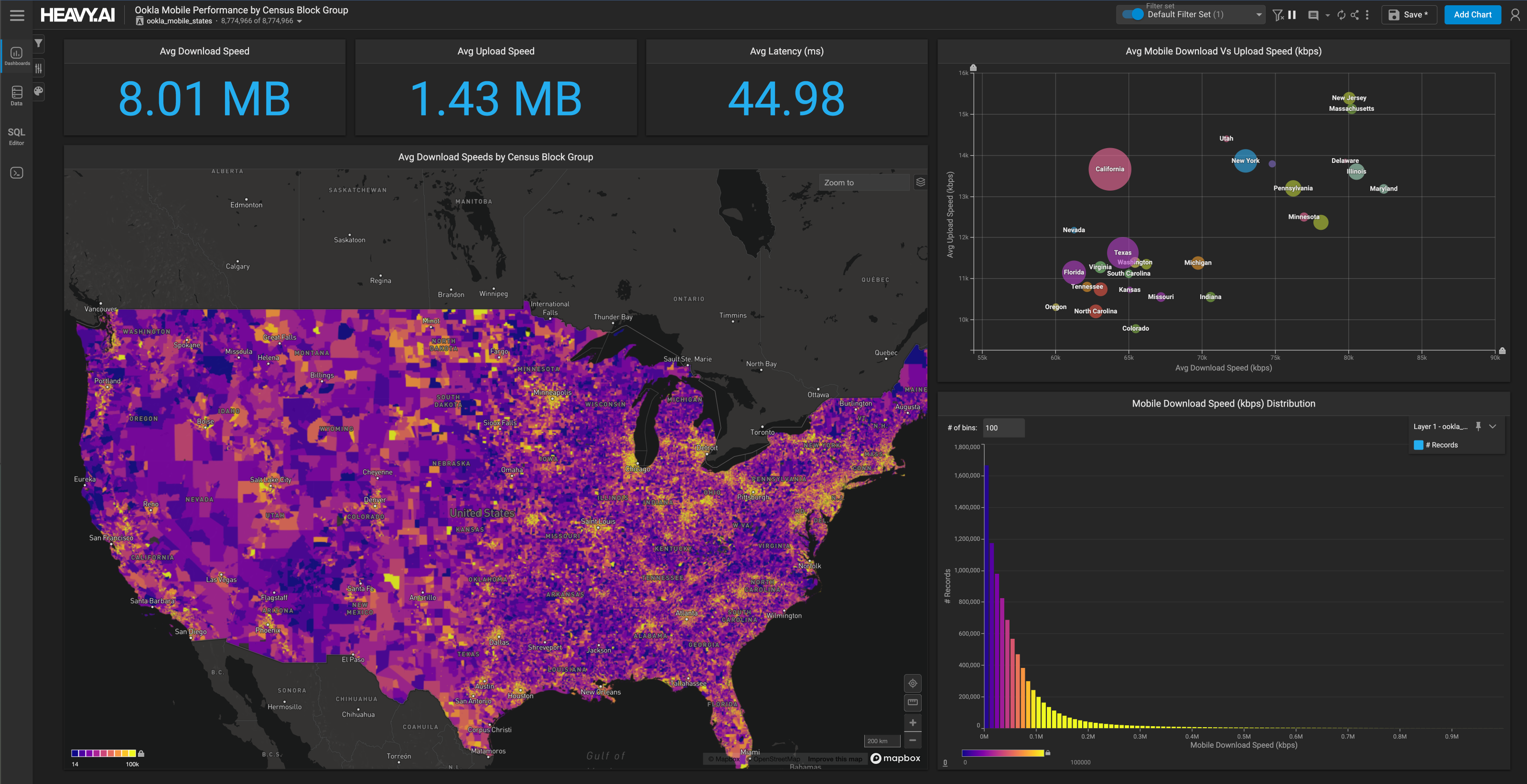

OmniSci provides a converged analytics platform that combines a lightning-fast SQL engine with real-time rendering, supporting up to tens of billions of records at a time with native geospatial functions. Running on standard GPU and CPU architectures, OmniSci queries on NVIDIA-Certified Systems run at least 100X faster than the fastest CPU-only visualization and analytics tools. With an advanced memory management system, OmniSci is built from the ground up to optimize the entire system’s performance specifically for visualizing data and running analytics.

All government agencies are trying to extract more knowledge from datasets, and they are looking for tools that help them explore data. OmniSci is especially useful for agencies with data that is VAST - Volume (tens of millions to hundreds of billions of rows), Agile (the need to interact and explore the data to make decisions), and Spatio-Temporal (the data includes location and time features).

There are two main types of users: data scientists looking to build and test ML models, and data analysts looking for insights. They face several new challenges relative to those working with conventional approaches such as statistical analysis. Machine learning algorithms require orders of magnitude more data in order to generate reliable models, and they can reach erroneous conclusions if trained on biased or unrepresentative data. Furthermore, the rules used by a model to reach a conclusion can themselves be complex, and require additional work to explain before being suitable to support uses such as policymaking.

Effective machine learning relies on data scientists to interactively explore datasets, formulate hypotheses, and then to validate those hypotheses against the data. This ‘human-in-the-loop’ analysis provides two benefits. First, it enables analysts to discover and engineer features and to find meaningful patterns. Those findings can be quickly operationalized and scaled as machine learning algorithms. Second, it provides ‘explainable’ machine learning algorithms - looking inside the black box - which has the potential to expose bias while ensuring that data is representative.

For data analysts, COVID-19 response provides an excellent example of the public sector’s need for big data agility. As understanding of the pandemic evolved, the government’s response to COVID-19 needed to incorporate positivity rates, testing logistics, contact tracing, and vaccine delivery. All of these create VAST GIS data - high volume, agile, and geographically referenced. Few, if any, analytics systems or GIS technologies have proven scalable enough to keep pace.

Use cases: How the public sector uses OmniSci to overcome limitations of GIS

OmniSci has provided a variety of solutions to improve the pandemic response, from social distancing compliance to contact tracing within specific, vulnerable arenas, such as meat-packing plants.

In another public sector case, OmniSci is able to build a common operational picture of navigation on the Mississippi River. Deciding where and when to dredge is a question of critical importance, as siltation and floods regularly shift and obstruct shipping channels. By combining AIS ship transponder data with topographic bathymetry, OmniSci generates real-time insights that show areas that are unsafe for navigation or may require attention. See OmniSci’s interactive Shipping Traffic Demo here.
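The idea of combining ship positions with depth soundings can be illustrated with a short Python sketch. This is only a toy illustration under stated assumptions, not OmniSci's implementation: the grid cell size, the `AisPing` fields, the under-keel clearance margin, and the sample values are all hypothetical and chosen for the example.

```python
from dataclasses import dataclass
from math import floor

CELL_DEG = 0.01        # assumed grid resolution in degrees (hypothetical)
MIN_CLEARANCE_M = 1.0  # assumed under-keel safety margin in meters (hypothetical)


@dataclass(frozen=True)
class AisPing:
    """One simplified ship position report (field names are illustrative)."""
    vessel_id: str
    lat: float
    lon: float
    draft_m: float  # how deep the hull sits below the waterline


def cell_of(lat: float, lon: float) -> tuple[int, int]:
    """Map a coordinate to the integer index of its grid cell."""
    return (floor(lat / CELL_DEG), floor(lon / CELL_DEG))


def unsafe_cells(pings, depth_by_cell):
    """Return {cell: smallest clearance seen} for cells where a vessel's
    clearance (water depth minus draft) fell below MIN_CLEARANCE_M."""
    flagged = {}
    for ping in pings:
        cell = cell_of(ping.lat, ping.lon)
        depth = depth_by_cell.get(cell)
        if depth is None:
            continue  # no depth sounding for this cell, so nothing to compare
        clearance = depth - ping.draft_m
        if clearance < MIN_CLEARANCE_M:
            flagged[cell] = min(clearance, flagged.get(cell, clearance))
    return flagged


# Made-up sample data: two vessels and a depth (in meters) for each of their cells.
sample_pings = [
    AisPing("vessel-A", 29.955, -90.065, draft_m=9.0),
    AisPing("vessel-B", 29.975, -90.045, draft_m=3.0),
]
sample_depths = {
    cell_of(29.955, -90.065): 9.5,
    cell_of(29.975, -90.045): 12.0,
}
sample_result = unsafe_cells(sample_pings, sample_depths)
```

In this sketch only the cell occupied by `vessel-A` is flagged, because its clearance falls below the assumed margin. A production system would run the equivalent spatial join over billions of position records, which is where GPU acceleration matters.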

In Flint, Michigan, OmniSci’s machine-learning platform is being used to identify water pipes that should be inspected for lead pollution. This not only has a huge impact on population health, but also helps service crews mitigate the issue more efficiently.

OmniSci and NVIDIA have helped public sector organizations tackle big data challenges including fleet management, logistics, and even flood prevention. Meanwhile, in healthcare, OmniSci has helped to counter fraud and abuse in Medicare, as well as to track public health phenomena like the opioid crisis.

As the availability of data increases, so do the public’s expectations of government to make the best use of it. There’s a need to make a step-change in the ability to see the big picture and to respond. Together, OmniSci and NVIDIA are helping federal intelligence and civilian agencies harness the power of NVIDIA-Certified Systems featuring NVIDIA GPUs to overcome traditional limitations of GIS and make a difference in public life.

OmniSci is proud to be a Platinum Sponsor at NVIDIA GTC21, running April 12 - 16! Join the OmniSci team and AI innovators, technologists and creatives for five days of sessions, training, and networking with industry leaders. Register for free here.

To learn more about how OmniSci helps federal, state, and local governments handle their largest datasets, visit OmniSci’s public sector page here.

Try OmniSci for yourself today: download OmniSci Free, a full-featured version available for use at no cost.