OmniSci 💙 Arrow

Note: OmniSci is now HEAVY.AI.

Led by Wes McKinney, Apache Arrow started off in 2016 as an efficient columnar data format compatible with a wide range of languages and toolkits in the data science community. The original goal was to simplify data exchange between different components of the data science ecosystem by providing a standard high-performance memory layout. Since then, Arrow has reached version 2.0.0 and experienced wide adoption in the data science community. A rich ecosystem of supporting tools has also emerged, both within the project itself and across the broader landscape of modern analytics platforms that support or integrate Arrow.

In 2017, we released support for Arrow in our Python toolkit (with contributions from Wes himself). Early on, our focus was to provide results from our query engine in Apache Arrow format. Shortly thereafter, we built on our Arrow result set to support output to the cuDF dataframe within NVIDIA’s RAPIDS toolkit.

We always knew there was potential for improving the performance and scalability of this output path, and making it more useful overall. Last year, we put a lot of effort into optimizing performance and scalability by reducing overheads while writing out Arrow result sets. Still, the only supported mode of using Arrow with OmniSci was via shared memory, i.e., the consuming process would need to be on the same machine as the OmniSci query engine.

With OmniSci 5.5, we’ve further improved performance. More importantly, we are introducing support for Arrow over the wire, which is now the fastest way to receive query results on a remote machine. We’ll discuss both briefly below.

Improving Arrow Performance

In OmniSci 5.5, we are able to return 100M records over 7x faster by delivering results as an Arrow buffer instead of our existing result set format.

Arrow on Remote Machines

Before OmniSci 5.5, we provided clients access to Arrow via shared memory. When the client runs on the same machine as OmniSciDB, this makes for a highly performant transport for the Arrow buffer, allowing the client to read the data directly with little overhead.

For many OmniSciDB users, the data science toolkit and database are run on separate machines. In these cases, the only method to receive query results was via our Thrift-based ResultSet. The ResultSet gets sent by Thrift to the client and then deserialized into client objects such as TRowSet and TColumn.

We’re now offering an alternative to our ResultSet format: Arrow over the wire. This allows for sending the Arrow buffer over the Thrift interface to remote clients, and enables using the buffer with pandas or other Arrow-capable projects with no additional work.
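To make the difference concrete, here is a minimal sketch of why shipping a columnar buffer can be cheaper for the client than deserializing a result set into per-value objects. It is a toy stand-in written with the Python standard library only: the payload layout (an 8-byte row count followed by raw float64 values) and the helper names `pack_column` and `unpack_column` are assumptions made for illustration, not the Arrow IPC format and not OmniSciDB's implementation.

```python
import struct
from array import array


def pack_column(values):
    """Server side: one contiguous float64 buffer, prefixed with the row count."""
    data = array("d", values)  # native byte order; a real wire format pins this down
    return struct.pack("<q", len(data)) + data.tobytes()


def unpack_column(payload):
    """Client side: view the received bytes as float64 without copying them."""
    (row_count,) = struct.unpack_from("<q", payload, 0)
    # Slicing and casting a memoryview reinterprets the bytes in place;
    # no Python float objects are created until individual values are read.
    return memoryview(payload)[8:8 + 8 * row_count].cast("d")


payload = pack_column([1.5, 2.5, 4.0])
column = unpack_column(payload)
print(len(column), column[2])
```

The client never walks the payload value by value to rebuild the column, which is the property that makes a columnar buffer attractive for large result sets.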

Here are some promising initial results.

You can use Arrow over the wire in both our Python and JavaScript APIs today.

Here, we have specified that we want to receive the results of a query in a data frame, and over the wire, rather than the existing shared memory approach. Note that shared memory can still be utilized by setting transport_method=TArrowTransport.SHARED_MEMORY.
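A minimal sketch of what such a call might look like is shown below. Only `transport_method` and `TArrowTransport.SHARED_MEMORY` are named in this post; the `select_ipc` method name, the `TArrowTransport.WIRE` value, the import paths, and the connection parameters in the commented usage are assumptions based on the pymapd client and should be checked against the version you have installed. The helper `query_to_dataframe` is hypothetical.

```python
def query_to_dataframe(con, sql, transport):
    """Run `sql` and return the result as a data frame built from an Arrow buffer.

    Assumes `con` exposes select_ipc(sql, transport_method=...), as the
    pymapd connection object is believed to; `transport` is a
    TArrowTransport value chosen by the caller.
    """
    return con.select_ipc(sql, transport_method=transport)


# Hypothetical usage against a running server (host, credentials, and the
# table name are placeholders; import paths are assumptions):
#
#   from pymapd import connect
#   from omnisci.thrift.ttypes import TArrowTransport
#
#   con = connect(user="admin", password="secret",
#                 host="db.example.com", dbname="omnisci")
#   df = query_to_dataframe(con, "SELECT * FROM flights LIMIT 1000",
#                           TArrowTransport.WIRE)
```

Passing the transport as an argument keeps the same helper usable for the shared memory path when client and server are on the same machine.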

You can also use this capability now within MapDConnector, our JavaScript API. Here is a simple example of using the queryDFAsync call to get results back in Arrow format.

OmniSciDB and deck.gl

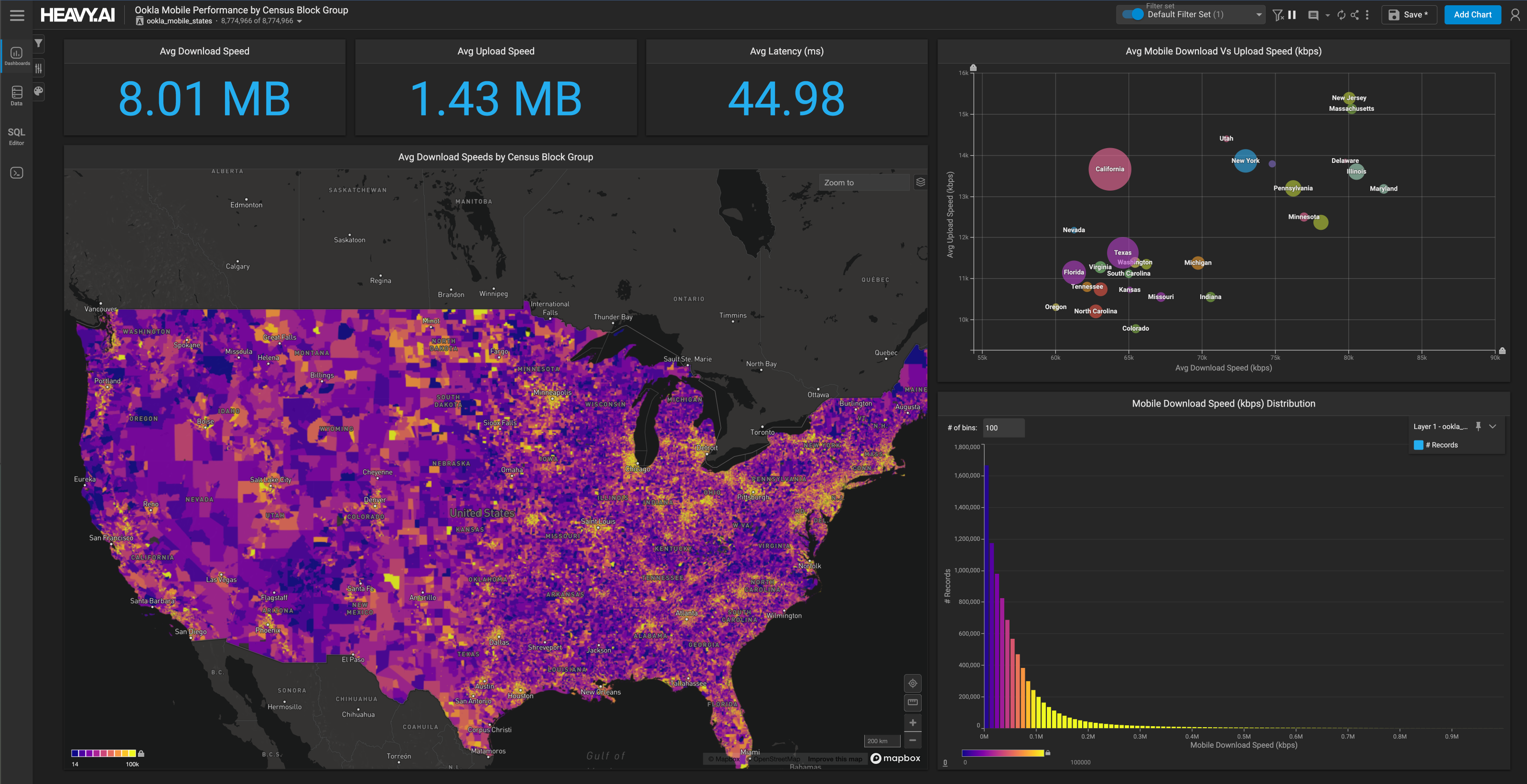

To show the capability of Arrow over the wire, we’ve integrated with deck.gl to use the Arrow buffer to render OmniSci results.

Here, our CEO Todd Mostak shows interactive visualization of 8M records of ADS-B flight data. Query results are served from OmniSciDB 5.5 and visualized with deck.gl using our mapd-connector with newly introduced Arrow wire capabilities.

What’s next for OmniSci and Arrow?

As we look ahead to 2021 (in more ways than one!), there are a number of major product goals for OmniSci where Arrow will play an increasingly critical part.

First, we are looking at leveraging our Arrow ingest path to build out efficient, performant OmniSci connectors to external systems such as Snowflake - this is after all one of the major advantages of wider Arrow adoption within the analytic ecosystem.

Next, we plan to explore the use of Arrow Flight as a transport, particularly in tandem with WebSockets, to ship large result sets efficiently over the wire to our JavaScript API (MapDConnector).

Incorporating Arrow-based results into our JavaScript API opens up several areas of interesting potential usage and ecosystem integration. Of particular recent interest to us is the work being done on Arquero by Jeff Heer’s group at the University of Washington, which provides a JavaScript analytics API similar to pandas that can operate natively on Arrow buffers. Specifically, we’re looking at how the Arquero team’s query serialization can allow them to delegate queries to SQL. Combined with our Arrow-based results, this could potentially provide an equivalent of Ibis in JavaScript.

Also, as important client-side libraries like deck.gl start to support Arrow natively, we gain a natural path to efficiently scale rich data visualization to large datasets, as illustrated by the example above.

Our long-time collaborator Dominik Moritz is already putting our Arrow result set to good use! Here’s an example Observable notebook showing how you can create a Vega-Lite chart from Arrow-based results.

Dom’s also updating the OmniSciDB backend for Falcon, to illustrate what is possible with crossfiltered exploration of large datasets powered by Arrow. In many ways, Falcon’s paradigm fits well with the motivations of our Arrow-over-the-wire support. Unlike Immerse, where we send multiple queries to OmniSciDB on a user interaction event (one per chart), Falcon materializes a dimensional cube that is then split into linked views using Vega-Lite. This allows interactive exploration of large datasets when paired with OmniSciDB.
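The cube idea can be sketched in a few lines. This is a toy illustration, not Falcon's actual implementation: the two dimensions (departure delay and distance), the bin widths, the sample rows, and the helper names `build_cube` and `view_of_b` are all made up for the example. The point is that once the binned counts are materialized, a brush on one dimension updates the linked view of the other without sending another query.

```python
from collections import Counter


def build_cube(rows, bin_a, bin_b):
    """Count rows per (bin of a, bin of b); stands in for one aggregate query."""
    return Counter((bin_a(a), bin_b(b)) for a, b in rows)


def view_of_b(cube, a_lo, a_hi):
    """Histogram of b for rows whose a-bin lies in [a_lo, a_hi], from the cube alone."""
    hist = Counter()
    for (a_bin, b_bin), count in cube.items():
        if a_lo <= a_bin <= a_hi:
            hist[b_bin] += count
    return dict(hist)


# Made-up rows: (departure delay in minutes, distance in miles).
rows = [(5, 120), (12, 480), (17, 450), (31, 900), (38, 130), (44, 950)]
cube = build_cube(rows, bin_a=lambda d: d // 10, bin_b=lambda m: m // 500)

# Brushing delay bins 1 through 3 re-derives the distance histogram locally.
print(view_of_b(cube, 1, 3))
```

In a real deployment the cube would come back from the database as an Arrow result, so its size, rather than the size of the raw table, determines how much data crosses the wire.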

Having Arrow-based results over the wire from OmniSciDB raises the ceiling on the size of these datasets. As we continue to optimize this capability, we hope to provide more exciting ways of combining purely local paradigms like Arquero, deck.gl and Falcon with high-performance analytic backends like OmniSciDB.

We’re thrilled to continue our quest to integrate Apache Arrow deeply into OmniSci and become part of the modern data science and analytics ecosystem. Please keep an eye out for additional blog posts on how to use the Arrow-over-the-wire capability, and let us know about your experience on our community forums. Also, if you’re interested in contributing or working on this, please reach out. We’re hiring!