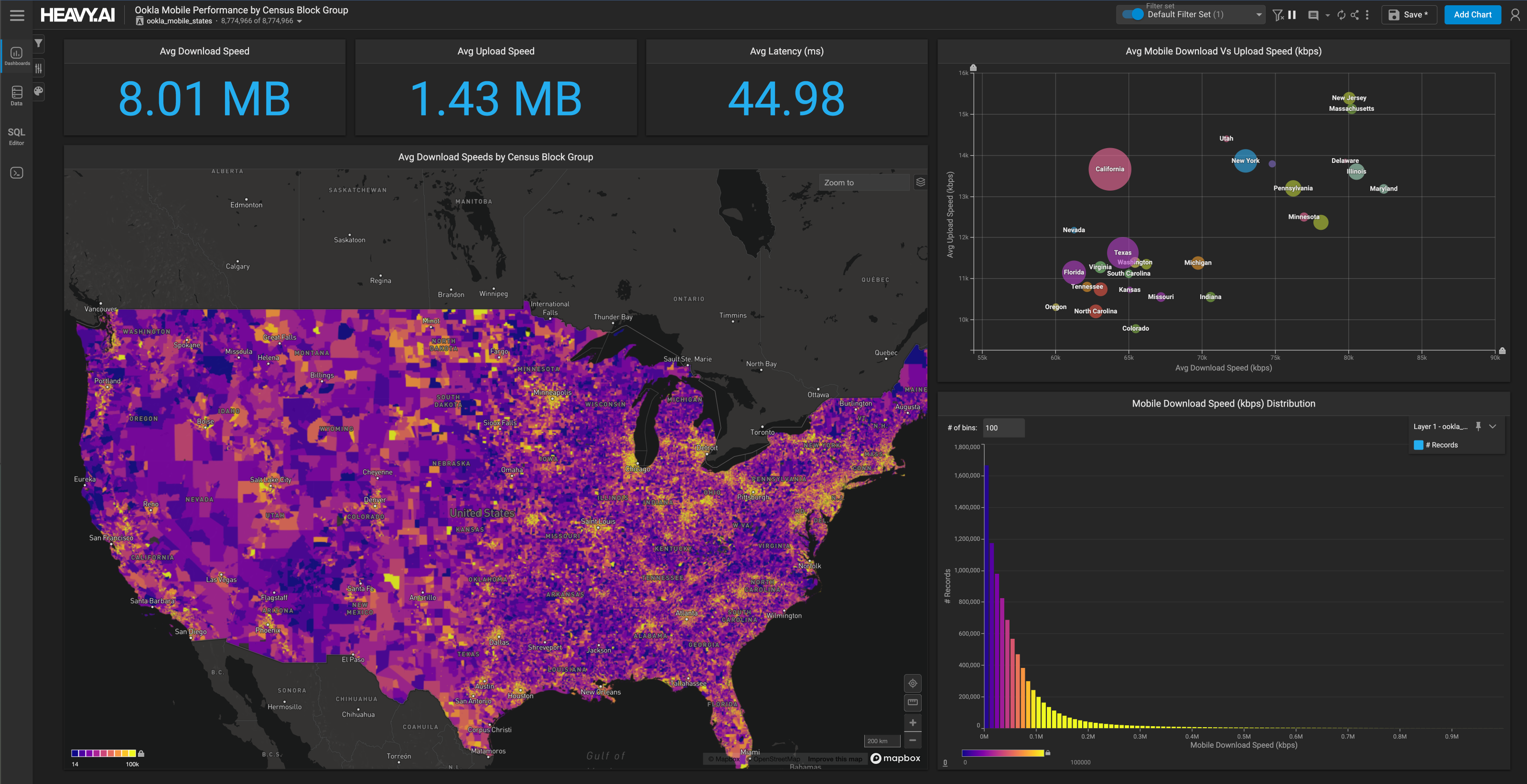

Making Geospatial Joins Interactive at Scale

Download HEAVY.AI Free, a full-featured version available for use at no cost.

GET FREE LICENSENOTE: OMNISCI IS NOW HEAVY.AI

SQL Joins are the quintessential database operation. A join allows the user to slice and dice multiple disparate data sets, e.g., combining transaction data with product SKUs or customer information with purchase history. Similar results could be created without joining but require wide flat tables and possibly heavy pre and post-processing. Properly executed, joins provide flexibility, increase performance and allow for a wide range of data exploration.

However, joins are, by definition, expensive. A join combines the columns from one or more tables into a new table using one or more predicates to match rows. It is instructive for programmers to think of a JOIN operation semantically equivalent to a for loop. Given the following query, which combines the records from table t1 and table t2 using the predicate column a in table t1 equal to column b in table t2, we can write a pseudocode for loop as follows:

Such an operation has complexity N * M, where N is the number of records in t1 and M is the number of records in t2. For small tables, this is trivial (particularly on GPUs). Nevertheless, for large tables or complicated join predicates, we quickly exceed the computational ability of even the most powerful single server systems. Join optimization is a well-studied area of database research, and many well-known approaches mitigate the “N squared” problem detailed above. One common approach is to leverage a hash table data structure to reduce the number of match tests required between the two tables. This method builds a hash table over one of the tables, and the loop is augmented with a lookup into the hash table to obtain the potential set of matching rows:

While the loop structure remains similar to our first example, the hash table can significantly reduce the number of searches in the second loop. In the best case, the hash table can provide both constant time lookup and strict equality guarantees, reducing the loop to:

OmniSciDB supports hash joins with on-the-fly construction of hash tables on both CPU and GPU. Multiple hash functions are supported depending on the complexity of the join’s equality predicate and/or size. Today, we will consider accelerating geospatial join queries using hash joins.

We define a geospatial join query as one which uses a geospatial operator as the join predicate. A user might have a table of geocoded tweets with longitude and latitude and want to determine what zipcode the tweet falls in -- possibly coloring tweets by zipcode on a rendered map in Immerse. Such a query might look like the following:

(note that left join preserves tweets with no match in the zipcodes datasets, meaning a tweet will be included with a “NULL” color if it has no corresponding zip code, rather than excluded)

To determine whether a tweet is inside a given zipcode, we need to check the tweet location point against each zipcode polygon exhaustively. Most algorithms require a check of each vertex of the zipcode polygon (there are a few methods for doing this; we use the winding method) against each point. Assuming we have 1 million tweets and 33,144 zipcodes, comparing each point to each polygon would result in 33 billion comparisons. Since the median zipcode has 166 vertices, we add more zeros for 5,501,904,000,000 total comparisons (5.5 trillion operations). This does not account for the operations required to load data, check boundary conditions, and more, so we can consider it a lower bound. The latest Ampere GPUs have nearly ten teraflops, which executes the above operation in about 500ms, but if we increase our tweets table to 100M, we see time to compute increase to 6 minutes. Most OmniSci customers use data in the range of 500M to the low billions, and most queries in OmniSci run in milliseconds. So, we need to employ some optimization to make geojoins interactive.

While it may not be readily apparent, we can apply the same hash table technique discussed above to the zipcodes table. We require two conditions; first, we need a suitable hash function to build our hash table. Moreover, we need an expression to hash over that has the following property: ST_Contains is true if the hash join expression is also true. We introduce a new operator called Overlaps, which satisfies this property.

We define Overlaps(b,a) or a overlaps b to be true if the bounding box of object a overlaps the bounding box of object b. Note that this operator may be better named ApproxOverlaps; we will stick with Overlaps for the rest of this article for brevity. For a point, we consider the bounding box to collapse to the point. So, if a is a point and b is another geospatial object, Overlaps returns true if point a is inside the bounding box surrounding the object b. Now we will prove ST_Contains is true if and only if Overlaps is true. Assume we have a point a and a geospatial object b and a bounding box b_box. Assume ST_Contains(b,a) is true (that is, the geospatial object b contains the point a) and assume Overlaps(b,a) is false (that is, a does not overlap b). The bounding box b_box contains b by definition. So, if b contains a, then a must be inside the bounding box for b. Therefore a overlaps b. However, we assumed Overlaps(b,a) was false, so we have a contradiction and, if ST_Contains(b,a) is true, then Overlaps(b,a) must also be true.

We can use this property to replace the expression ST_Contains(b,a) in the query with the binary predicate Overlaps(b,a) AND ST_Contains(b,a). We will then build a hash table over the expression Overlaps(b,a) and use the hash table to reduce the number of comparisons in the join. To do that, we need a hash function. Consider the bounding boxes of the geospatial objects b. If we take the union of all bounding boxes, make it a rectangle, and then subdivide this region into individual bins, each of the same size, we can create a function that spatially maps any point a into a bin. One such function assigns an integer identifier to each bin, computed by dividing the point coordinates by the corresponding bin dimension and taking the floor. Space-filling curves are another option. We opted for the simple hash function because our input data is not sorted or ordered, and queries typically target a single bucket, not a range of buckets. The memory locality properties of space-filling curves are an area of future exploration. To build a hash table for this function, we take the bounding box for each polygon, determine the bin(s) the bounding box overlaps, and write an identifier for the polygon into each bin.

Putting it all together, we combine the new expression Overlaps(b,a) AND ST_Contains(b,a) with the hash table over Overlaps(b,a). For a given point a, we first check the hash table to see which bin the point belongs to by applying the hash function to the point. We read the list of polygon IDs from the bin. This operation computes Overlaps for the point a and all polygons b (note that the condition is a bit weaker, in that a point could be in the same bin as a polygon but the two could not overlap, but it is sufficient for our purposes as any polygon not in the bin cannot possibly contain the point -- see figure 1 ). This process constrains the total number of comparisons for ST_Contains to only polygons which might contain the given point. If the bins are low occupancy, we can significantly reduce the number of comparisons required for each point. The following pseudocode illustrates this process:

We started with an expression, ST_Contains(b,a), and rewrote the expression to an equivalent expression, Overlaps(b,a) AND ST_Contains(b,a). We can then use the hash join framework with an appropriate hash function and hash table to constrain the search space of the expression and improve performance. So, how do we choose appropriate bin sizes when building the hash table?

Hash table sizing is a tradeoff between the size of the hash table and the number of records in each bin. The fewer records in each bin, the less work we have to do per bin; this means fewer expensive ST_Contains operations in our example above. However, as bins get smaller, more bins must cover the same area, increasing the time to build the hash table and its size. Our algorithm (see figure 2) searches for a hash table with low bin occupancy, capped to a maximum size. We parameterize the search over the "bin threshold," the minimum bin size we will allow in the hash table. The tuner initializes the bin size by iterating over all the bounding boxes we want to add to our hash table and determine the size of the bounding box in each dimension; if that bounding box size is less than the current bin threshold but greater than the current is chosen bin size, we take that as the new bin size. By lowering the bin size threshold, we can systematically search across both hash table size and bin occupancy.

We will walk through one step of the tuning process in detail. Each step computes a set of one-dimensional bin sizes (the framework currently only supports two-dimensional bins but is built to support arbitrary dimensionality). We can calculate the total hash table size and a metric for average bin occupancy from the computed bin sizes. We use the calculated size and occupancy to decide whether to continue tuning. First, we look to see if the hash table is too large. If the hash table has grown too big, we use the previously determined threshold value/bin sizes. However, if no previous value exists, we have likely overshot the bin size and need to pick a more significant threshold. In this case, the algorithm reverses itself and picks larger bins until we have a hash table under the size threshold. The remaining two cases are straightforward. Keys per bin is a measure of bin occupancy, i.e., how many bounding boxes overlap a given bin (in aggregate). If the keys per bin are increasing, or if we have reached the keys per bin threshold, we terminate the algorithm, assuming that the size costs of a larger hash table will outweigh any further occupancy gains, and use the values from the previous iteration.

We have run several experiments to demonstrate the algorithm search process and tradeoff between bin occupancy and hash table size. The experiments below use a left-hand side table with geotagged records from Twitter's public API (the "tweets" table). We used an AMD Threadripper 2950X with dual NVIDIA RTX 2080 GPUs. The tweets were loaded with the standard fragment size (32M rows per fragment), split evenly across both GPUs. No additional optimizations were applied. We used three polygon tables; counties in the United States (3,233 rows with 7,944,863 total vertices across all polygons), zipcodes in the United States (33,144 rows with 52,210,207 total vertices), and census blocks in the United States (220,740 rows with 67,581,813 total vertices. The query was a left join between the tweets table and the relevant polygon table, with the first run excluded to eliminate the initial disk fetch of the data. We used a single count aggregate with no filters in the projection. Data for each experiment are in the figures below.

All experiments consisted of setting a target key per bin parameter and determining the resulting hash table size, build time, and execution time of the query utilizing the hash table. Using these figures, we can understand how the algorithm behaves in various settings and what the tradeoff between hash table size and bin occupancy means for hash table build time and query execution.

Generally, results show that as the hash table gets larger, the build time becomes much more expensive, and the execution time can either moderately decrease, remain the same, or even increase. The US Counties in Tweets figure displays time on the y-axis; we see that the build time oscillates wildly at relatively low keys per bin, left of the red dotted line. We also see corresponding oscillations in the hash table size. Figure 4 (below) plotted the chosen bin thresholds against the same target keys per bin as in Figure 3(b). As one would reasonably expect, the largest hash table corresponds to the smallest bin size. However, occupancy and hash table size do not seem to be correlated at these small bin sizes.

To understand this correlation (or lack of correlation), we return to the tuning algorithm; to make tuning decisions, we must select new bin sizes for each step. Reducing the previous bin size by a fixed step and finding the largest bounding box dimension under the new threshold determines the new bin size per dimension. In this way, the algorithm moves through the different-sized bounding boxes, ensuring that at least one bounding box fits roughly within a bin. In practice, this avoids bin sizes scaled by a fixed size that does not match the underlying geometry (mainly since the units of the underlying geometry can vary by the coordinate system). By combining the bin size chooser with hash table size and keys per bin metrics, we can minimize execution time (by maximizing hash table size) using keys per bin to keep build times in check. Indeed, the default solution is either at or relatively close to optimal in Figure 2.

What happens when the bins get smaller? A slight variation in bin size at small scales results in a dramatic difference in hash table size. The small bin size is typically the result of many steps because the algorithm searches through bin sizes based on existing data and selects smaller bounding boxes at each iteration with a fixed step size. The keys per bin occupancy metric change as we work through bounding boxes of similar sizes. Suddenly, we jump to a much smaller bounding box; this causes the hash table size to increase dramatically and change the occupancy, which terminates the algorithm at the previous iteration (see Figure 4b). Essentially, the bin occupancy metric prevents us from “falling through” bounding boxes to one which is much smaller than the “average” bounding box size in the dataset.

In summary, our experiments show that the occupancy metric helps the tuning algorithm avoid dramatic changes in hash table size due to "slipping down" to the next group of bounding boxes, which may be much smaller than our experimentation group. By combining an experimentally determined minimum occupancy threshold with occupancy trend, we can abort tuning right at the edge of a reasonably large hash table (giving low bin occupancy and good performance) without falling into an unstable region where the hash table size can increase by orders of magnitude or oscillate wildly across similar occupancy metrics. While this algorithm produces strong results, additional optimizations are possible. We want to solve the optimization problem to maximize hash table size for a given occupancy threshold and pick an occupancy threshold that maximizes query execution time without creating an unreasonable build time. Consider this our first attempt at approximating a solution.

Even though we are looking at additional optimizations to increase parallelism and performance of our overlaps join approach, it already has shown to be of immense benefit for bringing big geo-joins to be interactive or near-interactive performance levels. On a machine, with 2 Nvidia RTX 3090 GPUs, overlaps join increased performance over loop join between 200 to 1,200X on various examples, at the most extreme increasing a join of US tweets to US census block groups from 11.3K lookups per second with loop join to 22.38M lookups per second with overlaps join. Finally, we believe there are additional speedups possible. For example, experiments leveraging space-filling curves to order and hash the data suggest the potential to gain an additional 3-4X in performance by increasing spatial locality and lowering execution divergence during the probe phase of the hash join, so stay tuned for more on this front.

Point in polygon joins are just one example application of the techniques described above. The generic OVERLAPS operator applies to various queries, including spatial and temporal queries (or combinations of the two). In general, two broad applications emerge; queries requiring an algorithm to construct the hash table and queries that dictate the construction of the hash table based on the predicate defining the OVERLAPS relationship in the query. We have implemented distance joins between geospatial points as an example of the latter type; if a user requests all points within N units of each other, we can construct the hash table such that a simple search of neighboring bins is always guaranteed to return the points which might be within N units of each other. While we are in the early stages of productionizing these techniques, the point in polygon join is available in OmniSci as of version 5.6 across all product modalities, including desktop, OmniSci Free, and the Enterprise Edition.