HeavyML: Deeper Insights with the Power of Machine Learning

Download HEAVY.AI Free, a full-featured version available for use at no cost.

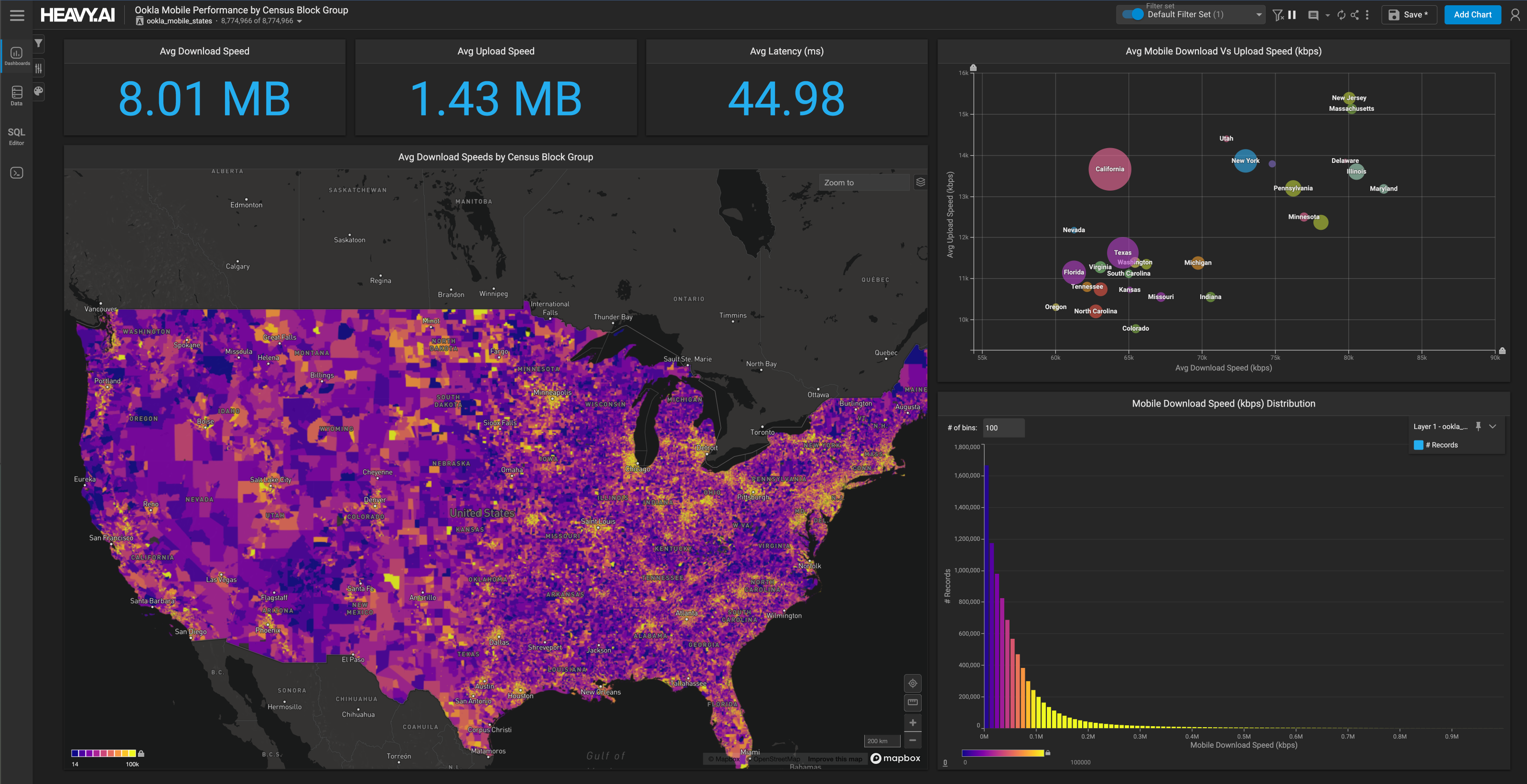

GET FREE LICENSEIn the banner 7.0 release of the HEAVY.AI platform, we are excited to announce the beta availability of machine learning capabilities in-product. These new features allow users to orchestrate advanced data science and predictive analytics workflows directly using our accelerated HeavyDB SQL engine and Heavy Immerse interactive visual analytics frontend, yielding significant performance and usability advantages.

Motivation

The line between the traditionally disparate disciplines of data analytics and data science have become increasingly blurred in recent years, as business focus has shifted from an emphasis on descriptive analytics (what happened) to predictive analytics (what will happen). With this shift, analytics users and consumers who would not typically classify themselves as data scientists want to not only see their data, but to leverage the power of machine learning to do things like find anomalies and predict future trends or infer missing values. However for the most part these more advanced capabilities are still only accessible via advanced, code-first notebook workflows missing in traditional BI and database products, leaving the power of ML out of reach for many users.

At the same time, executing data science and machine learning operations in different platforms from which the data is stored (typically a data warehouse or data lake) can lead to significant performance bottlenecks, particularly as the scale of the data increases. After all, data is heavy, it has gravity, and moving large amounts of it between systems (especially if they are running on different servers) imposes a performance tax.

All of which lead to the question: what if analytics users could tap into the power of ML in the SQL and visualization workflows they use every day, and do so interactively?

Overview of HeavyML

Given that HeavyML is only being released as a beta feature in 7.0, there are still a number of features and capabilities that are missing in this initial release. That said, users will still find they can execute common machine learning workflows with the release of 7.0, orders-of-magnitude more performantly and with greater ease-of-use than possible before.

The initial release of HeavyML offers support for four regression model types: random forest, decision tree, gradient boosted tree, and linear regression, and two clustering model types: KMeans and DBScan. Model creation and training can be done via a simple CREATE MODEL command, while inference for regression models is performed via a new first-class ML_PREDICT operator that can be used anywhere in a SQL query just like any other operator, and via special table functions for the clustering methods. Model training currently is executed in parallel on CPU using the high-performance Intel OneDAL library as a backend, while the ML_PREDICT inference operator is compiled down to model-specific code for both CPU and GPU, yielding world class performance (up to billions of rows per second for simple models).

While deeper integration into Heavy Immerse is planned for future releases, with 7.0 users can easily train a model using the SQL editor and then embed inferences using the ML_PREDICT operator in custom measures. Let’s walk through an end-to-end example of one particular use case for HeavyML: finding anomalously priced real estate. As hopefully the example will make clear, the ease-of-use and speed of leveraging HEAVY.AI’s native SQL capabilities for performing various ELT tasks, Immerse to easily see anomalies and correlations in the data, and then finally HeavyML for inline model training, evaluation, and inference, make for a powerful exploratory platform for feature engineering and model building.

ML for Fun and Profit

Many counties and states release parcel data, but few of these datasets match the quality of the data released by the Florida Geographic Data Library (FGDL) of the University of Florida. Released annually, this dataset provides an overview of the state’s roughly 10.5M residential, commercial, and publicly held land parcels, including tax information for the parcel as well as attributes such as the parcel acreage, use class, and square footage of any structures. It also includes the sales price for any parcels sold for that year.

Let’s start by ingesting the most recently released parcel dataset from FGDL for the year 2021 into HeavyDB. We can do that through the Import section in Immerse if we’ve already downloaded the file, or one can do it directly from SQL via:

This results in the following table schema, accessible in Immerse or via SQL by issuing the following command:

Let’s get a few summary statistics on the table:

Meaning that there are ~10.5 million parcels on the table, 1.84M of which changed hands in 2021.

Let’s see the parcels on a map, which is easy to do in Immerse by making a choropleth chart (the geo measure will automatically be set to geom). Let’s also color by PARUSEDESC to see how the various use classes of the parcels are distributed spatially. One of the strengths of the HEAVY.AI platform is being able to render large numbers of geographies interactively using the GPU-accelerated server-side rendering of Heavy Render.

Here is a zoomed-in view of Miami:

We can see that residential housing constitutes the bulk of the parcel use classes outside of downtown Miami and the very periphery of the city.

Let’s focus on the sales price variable, as there are a number of applications of using ML to be able to predict this value, including:

- Predicting the sales price for missing values, i.e. the ~8.7M parcels that didn’t sell in 2021 and so don’t have a sales price attached

- Predicting the sales price for parcels that are currently on the market

- Determining the main drivers for sales price, which can be useful whether you’re a real estate developer or a county official looking to cultivate land values in your area.

- Finding parcels that are sold for, or are listed for, significantly more or less than the predicted value. In the latter case, parcels listed below their predicted value could be good opportunities for investment or arbitrage.

We can look more closely on the distribution of sales prices by creating a combo chart in Immerse and setting SALEPRC1 as the dimension, with # Records (here, parcels) as the measure. SALEPRC1, according to the provided data dictionary, is “Sale Price (Most Recent Sale)”. There is also a SALEPRC2 variable which contains the price of the second to most recent sale in the year, if any such sale occurred, but given the relative scarcity of multiple sales for a property in a single year, we will be focusing solely on SALEPRC1 for this analysis.

As might be expected, the variable shows strong left-skew, with a few parcels sold for up to $250M, but most significantly less than $1M.

Given that the values for real estate will vary widely by category, let’s drill down on single-family residential parcels by creating another combo chart on PARUSEDEC, and clicking on the bars for ‘SINGLE FAMILY’ and ‘CONDOMINIUMS‘ to cross-filter the sales prices by these categories.

We can apply a filter on the range chart (bottom sub-chart) of the sales price histogram to only look at sales below $2M. One thing you’ll quickly notice is the spike in sale prices near 0, likely representing non-arms-length sales or other data anomalies. For the best results, we’ll want to exclude these “free” properties when we build our ML models.

With this in mind, let’s take our first pass as generating a model to predict sales price for Single-Family and Condominium residential properties. First, we’ll start with the old standard of linear regression, which while not generally possessing the high-predictive power of tree-based models, serves as a good baseline and also adds value due to the explanatory information embedded in the regression coefficients themselves.

As a starting point, let’s try to predict the sales price (SALEPRC1) for single-family residences and condominiums based on a set of “usual suspect” continuous (numeric) predictors, namely the parcel acreage (ACRES), the living area in square feet of structures on the parcel (TOTLVGAREA), the year the structure or structures on the property were built (EFFYRBLT), and the sale year (SALEYR1). In this dataset for 2021, the latter variable only contains the values 2020 and 2021, but this still may provide explanatory value due to the real estate market heating up during the 2020-2021 timeframe, plus it sets us up well if we want to do deeper longitudinal analysis comprising the older annual Florida parcels datasets that FGDL provides.

To build a regression model in HEAVY.AI, we can use the CREATE MODEL statement.

Note the SQL in the SELECT statement can be any valid SQL, with filters, group bys, joins, etc as needed. So to perform a linear regression to predict sales price based on the variables above, filtering out properties that do not have sensible sales prices between $10K and $10M, you can run:

This will predict the value of SALEPRC1 of single-family homes and condos based on the values of the ACRES, TOTLVGAREA, EFFYRBLT, and SALEYR1 variables. Note that any rows where we have nulls for the predicted or predictor variables will be automatically removed from the training set, so you don’t have to worry about cleaning up missing data manually.

As a simple model, this train/fit operation runs very quickly, taking 87ms on my workstation. As you can see, we also provided an option to the CREATE MODEL statement using the WITH clause, here specifying a “hold-out” evaluation or test set comprising 20% of the original data. When an evaluation fraction is specified, we can easily test the performance of the model by running EVALUATE MODEL <model_name>, which returns the R2 score (explaining the proportion of the variance of the predicted variable, here SALEPRC1, that can be explained by the predictor variables. As an aside, if an evaluation fraction is not specified, or if you want to test the performance of the model “out-of-band” on a different dataset, or perhaps a subset of the original data, you can run EVALUATE MODEL <model_name> ON <select_query>, projecting out the predicted variable and predictor variables just as you do in a CREATE MODEL statement. But since we did specify an evaluation set, testing the model’s accuracy on data it was not trained on is as simple as the following:

This means that roughly 20.1% of the variance in sales price can be explained by the variables provided with the linear regression model. This is not great in terms of model fit, as it means nearly 80% of the variance in sales price is unexplained by the model, but remember, we’re just getting started!

For linear regression models, we can access the regression coefficients by running the linear_reg_coefs table function, using the model name as the sole argument. In future releases, an easier to use SHOW MODEL FEATURE DETAILS <model_name> is planned that will provide relevant information for any model type.

As mentioned above, linear regression is nice as the model parameters, i.e. the linear regression coefficients, are readily interpretable by human users. Here, the above regression coefficients suggest that parcel sales price goes up $408 per acre, $69 per living area square foot, $2592 for each year newer the dwelling is, and $92,324 for each year later the property is sold (again, given only 2020 and 2021 exist in this data for the SALEYR1 variable, suggesting the real estate market was rapidly heating up into 2021).

Let’s try adding a few more predictor variables into the mix, this time categorical variables. HeavyML natively supports one-hot encoding of categorical variables (essentially making a virtual column for each attribute of a categorical column, containing either 0 or 1 for absence or presence of the attribute), making it easy to test hypotheses involving categorical predictors. (Technically for better fit it performs dummy encoding as it will not encode the least common attribute, but we won’t delve into that here). Note that compared to a typical Pandas/Scikit-Learn based workflow, HeavyML SQL is designed to make tasks like one-hot-encoding and null handling as effortless as possible.

Here we will add two categorical predictors, the county name and the parcel use class (filtered to only be ‘SINGLE FAMILY’ or ‘CONDOMINIUMS‘). By default, HeavyML only one-hot encodes the top-10 values, measured by frequency, for a categorical variable, but given there are 67 counties in Florida, we can specify that we want to encode more by using the CAT_TOP_K option). Also note the use of the CREATE OR REPLACE clause, which will allow us to overwrite the previously stored model.

Now we can run EVALUATE MODEL again, noting that the inclusion of the two categorical variables has significantly improved the model accuracy.

Not bad, with the inclusion of a few simple variables we are now up to explaining 27% of the variance. To do better though, we need to try something more powerful, in this case tree-based models.

Random Forest to the Rescue

HeavyML natively supports three tree-based regression models, Decision Tree, Gradient Boosted Tree, and Random Forest models. Here, we will focus on Random Forest models as they are not only powerful but provide useful explanatory info via a variable importance metric that indicates which predictor variables provide the most explanatory power.

Explaining random forest models is beyond the scope of this blog post (for a deeper overview, check out this helpful tutorial), but in essence they involve the construction of multiple decision trees of if-else statements based on values of the predictor inputs to predict the output variable (sales price here) based on, for regression, the average predicted value from each tree.

Creating a random forest model in HeavyML is as simple as taking our previous CREATE MODEL statement and changing the model type to RANDOM_FOREST_REG, as seen below:

Creating the random forest model takes a bit longer than the linear regression model due to its additional complexity, but still finishes in a few seconds on my machine, fully using whatever CPU cores are available.

Now we can evaluate the new model using the EVALUATE MODEL command:

So just simply changing our model type increased the variance explained from 27% to nearly 61%!

We can do better still by tweaking our model to increase the number of trees used in the model. By default, HeavyML builds random forests of 10 trees, but let’s increase this number to 100 by specifying the NUM_TREES option(There are a number of other options as well, please see our docs for more information).

Very nice, so simply increasing the size of the random forest bumped up our explained variance to nearly 65%.

We’re not quite done though. One could imagine a number of other variables with explanatory power that might help us build an even more accurate model, some general like the income of the area a parcel is located in and nearby school scores, and others more Florida-specific like distance to the shoreline. Here we’ll focus on the income of the census block group each parcel is located in, and the distance in kilometers from the parcel to the coastline.

Given the Florida parcels data already has a census block group column built-in, it is easy to join to relevant census metadata to get variables like average income. To get the distance to the coastline, we can avail ourselves of HEAVY.AI’s powerful GeoSQL capabilities, by executing a spatial join against the US Tiger coastline dataset as follows:

Without going into details, it is easy to use joins like the above to produce two new explanatory variables in the data, shoreline_distance_km, and census_blk_grp_median_income. In fact, if desired, we can do the joins directly in the CREATE MODEL statement, but it is recommended to use UPDATE clauses to add these variables to the data so that they can be easily reused for not only model training but also inference down-the-line.

Now let’s run the model with the two additional predictor variables, shoreline_distance_km and census_blk_grp_median_income:

And now to evaluate it:

Improving significantly upon the previous results, the model now is now explaining three-quarters of the variance of sales price. One can imagine by adding additional variables like school, walkability, and crime scores and by tweaking the hyperparameters of the random forest model (or perhaps trying a boosted tree model), we could improve this even further.

We can get a sense of the explanatory power of the model by running the random_forest_reg_var_importance table function and sorting by importance_score, showing here the Mean Decrease in Accuracy (MDA) scaled by standard deviation (When creating random forest models, you can set var_importance_metric in the WITH options to one of ‘NONE’, ‘MDI’, (Mean Decrease in Impurity, or Gini Score) ‘MDA’, or ‘MDA_SCALED’)

Here we can see that a parcel’s presence in Walton County (in the Northwest of Florida) has the most explanatory power, followed by the structure square footage, parcel acreage, ‘SINGLE FAMILY’ status of the parcel use class, effective year built, and the median income of the parcel’s census block group.

Model Inference

As our last step in this workflow tour in HeavyML, let’s turn our attention now to putting our newly created model to use for interactive prediction.

Using a model for inference using HeavyML is simple with the built-in ML_PREDICT operator, which notably runs per-row for easy use in SQL or in Immerse dashboards. ML_PREDICT is also GPU-accelerated for maximum performance, meaning that the tree inference will run across whatever GPUs are available on your machine, falling back to CPU if no GPUs are present. The syntax is as follows:

Let’s use this in a custom measure in an Immerse dashboard to compute the percentage error from the actual sales price, helpful in spotting anomalies (which could represent market mis-pricings and investment opportunities).

To do this, we’ll change the Immerse choropleth color measure from the previous value showing the parcel use class to a new custom measure capturing the percentage error of the predicted sales price versus the actual sales price for a parcel (filtering to only look at Single-Family homes and condos between $10K and $10M).

We get the resulting map showing percentage error, here zooming into the Miami region. Brighter (more yellow) values represent areas in which the model over-predicted the price, darker/purple areas where it under-predicted the price.

As you can see, there are still clusters of over and under-prediction, suggesting that there are still some components of parcel location that could be exploited for additional predictive power with further feature engineering work.

We can also use the raw output of the model to “fill-in” estimated property values for the majority of data that doesn’t have a a sales price attached to it simply by removing the portion of the above measure that normalizes by actual sales price, i.e. simply making the measure:

As evident from the map, certain areas like Kenall and parcels directly on the beach are predicted to have higher prices. Note that it is simple in Immerse to look at prediction trends for the entire state as well as individual neighborhoods, just zoom in and out on the map!

Conclusion

We’re just scratching the surface in terms of ways you can use the new HeavyML capabilities of our platform, but hopefully the example above was enough to get you excited about your own potential use cases. As always, you can try out the power of the HEAVY.AI platform for yourself with our free community edition, HEAVY.AI Free, or by requesting a conversation with one of our engineering team members.