End-to-End Machine Learning with GOAI

In May 2017, MapD, along with H2O.ai and Continuum Analytics, announced the GPU Open Analytics Initiative (GOAI), with the goal of accelerating end-to-end analytics and machine learning on GPUs. Adoption of GPUs for general-purpose computing is a computing revolution driven by NVIDIA’s hardware innovations.

The first project of the initiative was to develop a GPU Data Frame (GDF), providing a mechanism for different processes on the GPU to interchange data more efficiently. We chose to build the GDF on the memory format of Apache Arrow, a widely adopted framework for in-memory analytics. Adopting an existing standard makes it easier to tie the GDF into the ecosystem and infrastructure already built around Arrow, allowing MapD and others in GOAI to leverage existing features like Parquet-to-Arrow conversion.
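To make the idea of a shared columnar memory format concrete, here is a minimal CPU-only sketch in plain Python. It is an analogy, not the GDF or Arrow API: the helper names `make_frame` and `column_view` are hypothetical, the sample records are made up, and the standard-library `array` and `memoryview` types stand in for GPU buffers. It shows each column stored as one contiguous typed buffer that a consumer can read without copying.

```python
from array import array


def make_frame(rows, schema):
    """Pivot row tuples into contiguous, typed column buffers.

    schema maps column name -> array typecode ('d' = float64, 'q' = int64).
    """
    names = list(schema)
    columns = {name: array(schema[name]) for name in names}
    for row in rows:
        for name, value in zip(names, row):
            columns[name].append(value)
    return columns


def column_view(frame, name):
    """Return a zero-copy view over one column's underlying buffer."""
    return memoryview(frame[name])


# Made-up mortgage-like records: (loan_id, interest_rate)
rows = [(1, 3.75), (2, 4.10), (3, 3.95)]
frame = make_frame(rows, {"loan_id": "q", "interest_rate": "d"})

view = column_view(frame, "interest_rate")

# Producer updates the column in place; the consumer's view shares the
# same buffer, so it observes the change without any copy or conversion.
frame["interest_rate"][0] = 3.50
print(view[0])
print(view.itemsize)  # bytes per element in the column buffer
```

The design point this illustrates is that agreeing on one in-memory layout lets a producer and a consumer exchange a reference to a buffer instead of serializing and deserializing its contents.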

While GPUs can provide 100x more processing cores and 20x greater memory bandwidth than CPUs, systems and platforms are unable to harness these performance gains because they remain isolated from each other. The initial GOAI prototype integrated the GDF to allow seamless passing of data among processes running on the same GPUs, and was shown to provide significant speedups (100x or more) compared to the status quo of sending data back to the CPU and over the network.

Unveiling a MapD/H2O.ai collaboration

MapD and H2O.ai are very excited to share new developments with the GOAI community. We have created a working prototype of end-to-end machine learning that spans the MapD Core analytic database, the MapD Immerse visual analytics client, Continuum Analytics’ Python API, and H2O.ai. The result enables lightning-fast interactive data exploration and analysis, feature selection, model training, and model validation by avoiding any serialization overhead when moving data between processes. We will be debuting the MapD & H2O.ai demo on a 1.1 billion record U.S. mortgage dataset at Strata Data Conference NY.

We were able to transform the process of machine learning into an interactive experience by outputting MapD query results into a GPU Data Frame (GDF) and piping them directly to Anaconda and H2O.ai for further processing. Using the GDF, data scientists can use MapD for feature selection and efficient pre-processing, and then feed data into H2O.ai’s machine learning pipelines.

We will demo how you can use MapD and H2O.ai to visualize billions of rows of mortgage data, and show you how you can do feature engineering on the large dataset interactively. While the machine learning algorithms themselves typically get most of the attention, the data science workflow around exploring data, feature engineering, and iterative model training usually takes most of a data scientist’s time. By using GPUs to accelerate all of the above, a data scientist can work significantly faster and test more hypotheses than previously possible.

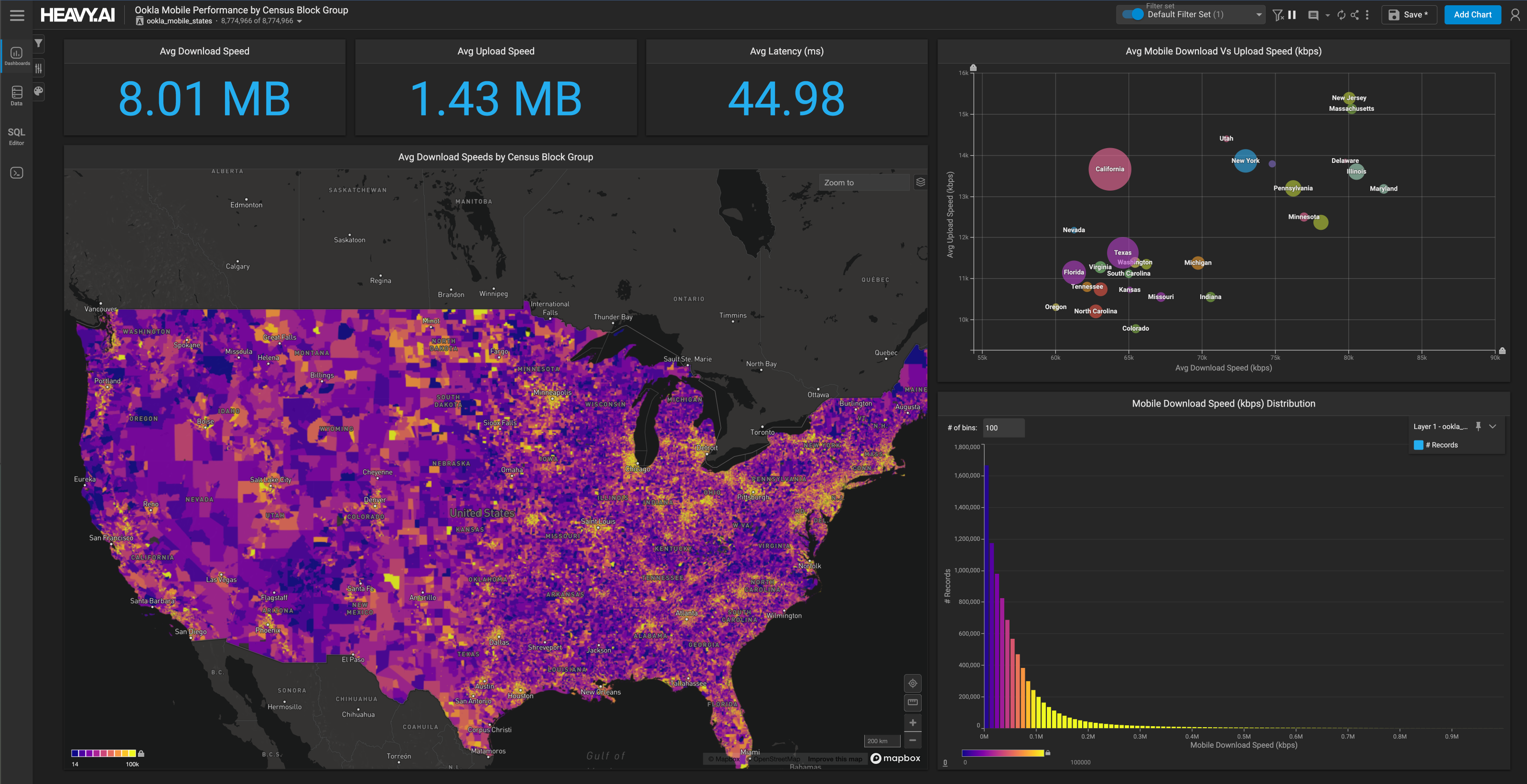

The first step is to explore and discover features in the large dataset. Dashboards in MapD Immerse are dynamic, contextual, and highly visual by virtue of being powered by the lightning-fast GPU-accelerated MapD Core analytic database. This enables users who have the best understanding of their data to extract both business-driven and data-driven features. As you can see below, the highly interactive and visual nature of data exploration in MapD Immerse makes the feature selection process intuitive. The charts are linked to raw data so users can drill down to verify their hypotheses.

After selecting features, the user can configure the parameters of the machine learning algorithm and click the train button. Here we are kicking off an H2O.ai GPU-accelerated Generalized Linear Model (GLM) regression.
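For readers unfamiliar with GLM regression, the following is a minimal sketch of the simplest case. It is not H2O.ai's GPU-accelerated implementation: it relies on the fact that a GLM with a Gaussian family and identity link reduces to ordinary least squares, and it fits a single feature in plain Python. The function names `fit_linear` and `predict` and the toy data are assumptions made for illustration.

```python
def fit_linear(xs, ys):
    """Fit y ~ intercept + slope * x by ordinary least squares."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of cross-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(model, xs):
    """Apply a fitted (intercept, slope) pair to new feature values."""
    intercept, slope = model
    return [intercept + slope * x for x in xs]


# Made-up toy data lying exactly on the line y = 1 + 2x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

model = fit_linear(xs, ys)
print(model)
print(predict(model, [5.0]))
```

A production GLM handles many features, regularization, and other families and link functions; the closed-form fit here only conveys what "training" produces, namely coefficients that can then be applied to unseen rows.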

The accuracy of the model is measured by its RMSE (root mean square error). If the RMSE is high, the user can tweak the features and parameters to reduce it (ideally to a number less than one).
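As a reference for the metric, RMSE is the square root of the mean of the squared differences between actual and predicted values. The sketch below computes it in plain Python; the function name `rmse` and the sample values are illustrative assumptions, not output from the demo.

```python
import math


def rmse(actual, predicted):
    """Root mean square error between two equal-length sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up values: two predictions are off by 1, two are exact.
actual = [3.0, 5.0, 7.0, 9.0]
predicted = [2.0, 5.0, 8.0, 9.0]

print(rmse(actual, predicted))
```

Because RMSE is expressed in the units of the target variable, what counts as a low value depends on how the target is scaled.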

The user then executes the model on a new dataset to predict a future outcome. You can then create a scatterplot of actual vs. predicted to see how the model performs in real-world scenarios.

As you can see, building an accurate predictive model is a highly iterative process that benefits from being able to visually explore the data at interactive speeds. The end-to-end machine learning powered by GOAI helps to:

- Bring the processing to the data, lowering time spent marshaling and copying data between processes

- Enable data scientists to interactively discover both business-specific and data-specific features

- Boost performance and productivity by leveraging parallel processing on GPUs

- Accelerate feature engineering, model training, model scoring, and prediction using GDFs

A revolution is taking place in the GPU software stack, driven by NVIDIA’s hardware innovations. That revolution is transforming the fields of analytics, machine learning, and deep learning. GDFs break down the silos between systems and software to enable interactive data exploration, feature engineering, model training, and model scoring. Of course, what we are unveiling today represents just the first step in building a vibrant GPU analytics ecosystem. Please stay tuned as we continue to refine the infrastructure to enable end-to-end GPU analytics. In the meantime, we’d love your feedback. We made this an open-source project so that many bright minds can help build it!

We're happy to share information specific to your business needs to help with your research into harnessing the power of GPUs. If you are at Strata Data Conference NY, please visit us at the MapD booth (#839) to see a live demo, or attend the session by MapD Founder & CEO Todd Mostak on Wed (9/27) at 1:15pm in 1E 17 about how to accelerate your analytics with a GPU Data Frame.